
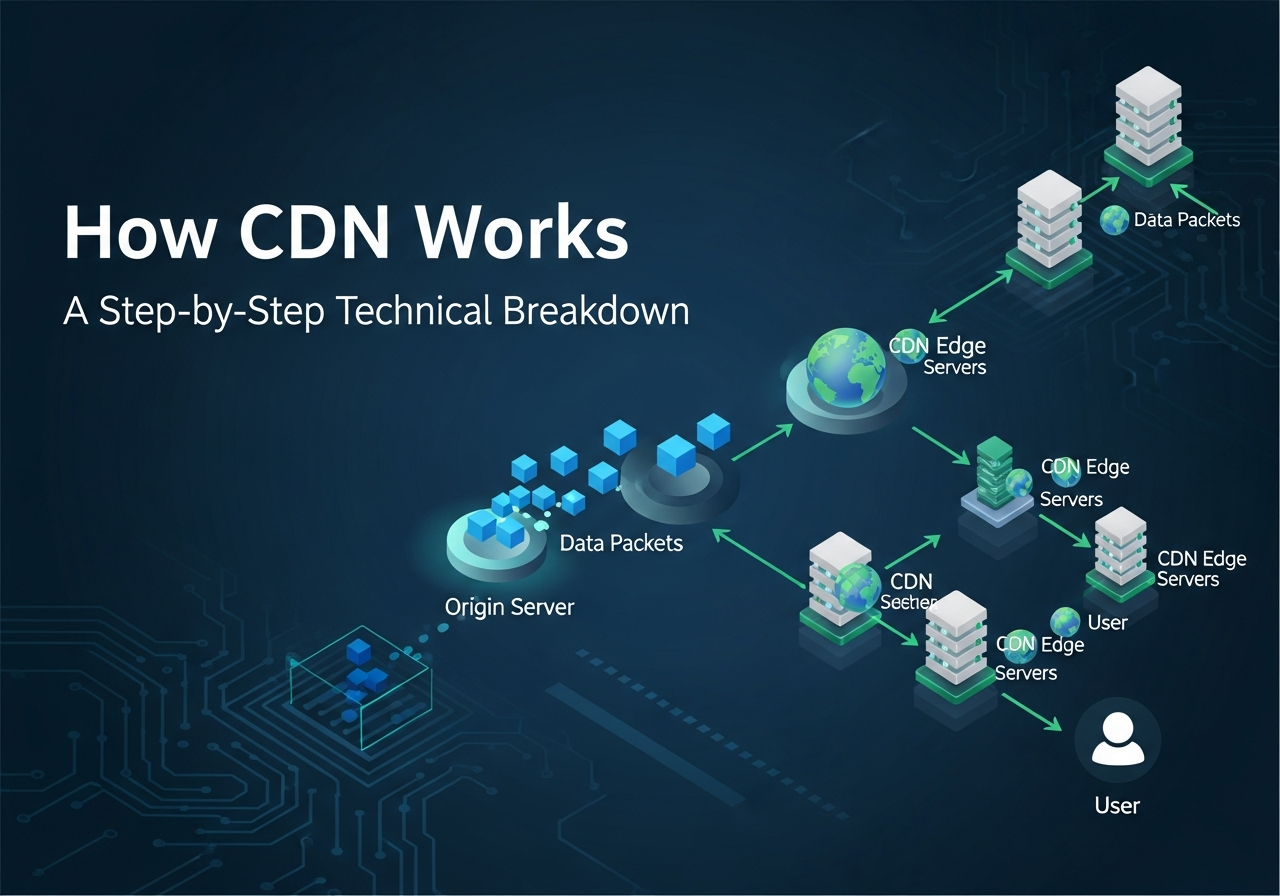

Take a user-to-origin path with 150 ms RTT. On a cold HTTPS connection, you burn roughly 3 RTTs on transport, crypto, and request-response turn-taking before the origin has done meaningful work. That is about 450 ms of network tax before app latency, queueing, shielding, or a single lost packet. This is the part of how a CDN works that most architecture diagrams flatten into one arrow, and it is why adding more origin capacity rarely fixes global p95.

At scale, the failure mode is not just slow pages. It is tail amplification: cache misses stacking on shield, sudden origin fan-out during a purge, TLS handshakes moving CPU to the worst possible place, and long-haul congestion turning a small amount of packet loss into seconds of startup delay for video segments and downloads. The naive fix, bigger origins and shorter TTLs, usually increases the amount of expensive work happening furthest from the user.

If you want to understand how a CDN works in production, start with round trips, not marketing diagrams. A cache hit is valuable because it removes distance and handshake cost from the critical path. A miss is expensive because it puts long-haul transport, origin concurrency, and cache-fill behavior back into play.

The protocol floor is straightforward. TCP consumes 1 RTT for connection setup per RFC 9293. TLS 1.3 adds 1 RTT for a full handshake per RFC 8446. Then the client sends the HTTP request and waits for response bytes, which is another RTT-scale turn unless you are on a resumed QUIC path with 0-RTT data and favorable replay semantics under RFC 9000.

| Path | Assumed RTT | Round trips before first byte | Network floor before origin work | Notes |
|---|---|---|---|---|
| HTTP/2 to distant origin | 150 ms | 3 | ~450 ms | TCP 1 RTT, TLS 1.3 1 RTT, request-response 1 RTT |
| HTTP/2 to nearby edge | 20 ms | 3 | ~60 ms | Same protocol cost, far shorter path |
| HTTP/3 to nearby edge, initial connection | 20 ms | 2 | ~40 ms | QUIC combines transport and crypto setup |
| HTTP/3 resumed connection | 20 ms | ~1 effective RTT | ~20 ms | Best case, subject to 0-RTT policy and request safety |

Those are floor values, not medians. Real systems pay extra for queueing, packet loss, anti-replay constraints, and application work. RIPE Atlas and APNIC Labs measurements published in 2025 continue to show the same broad pattern: regional RTTs are often sub-20 ms, while intercontinental paths regularly land well above 120 ms. That order-of-magnitude gap is the economic engine of the edge.
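The floor column in the table is plain multiplication of round trips by RTT. A minimal Python sketch of that arithmetic follows; `SETUP_RTTS` and `network_floor_ms` are illustrative names, and the 0-RTT entry assumes the request is replay-safe and the server accepts early data.

```python
# Round trips paid before the first response byte (values from the table).
SETUP_RTTS = {
    "http2_tcp_tls13": 3,     # TCP 1 RTT + TLS 1.3 full handshake 1 RTT + request/response 1 RTT
    "http3_initial": 2,       # combined QUIC/TLS handshake 1 RTT + request/response 1 RTT
    "http3_resumed_0rtt": 1,  # request sent as 0-RTT data; only for replay-safe requests
}


def network_floor_ms(rtt_ms: float, path: str) -> float:
    """Protocol-only lower bound on time to first byte; ignores queueing, loss and server time."""
    return SETUP_RTTS[path] * rtt_ms


for label, rtt_ms, path in [
    ("HTTP/2 to distant origin", 150, "http2_tcp_tls13"),
    ("HTTP/2 to nearby edge", 20, "http2_tcp_tls13"),
    ("HTTP/3 to nearby edge, initial", 20, "http3_initial"),
    ("HTTP/3 resumed, 0-RTT", 20, "http3_resumed_0rtt"),
]:
    print(f"{label:<32} {network_floor_ms(rtt_ms, path):>5.0f} ms")
```

Anything measured above these numbers is queueing, loss recovery, or server work, which is exactly the part worth investigating.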

Now add bandwidth-delay product. A 100 Mbps transfer over a 150 ms RTT path needs roughly 1.875 MB in flight to fill the pipe. If congestion window growth, loss recovery, or receiver behavior keeps the sender below that, large object delivery underutilizes available bandwidth even when the origin is idle. That is why software installers, game patches, and large media objects benefit disproportionately from edge termination and warm cache.
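A small sketch of the bandwidth-delay arithmetic follows. The 256 KiB effective window is a hypothetical value chosen only to show why a window that fills a 20 ms path starves a 150 ms one; function names are illustrative.

```python
def bdp_bytes(link_bps: float, rtt_s: float) -> float:
    """Bandwidth-delay product: bytes that must be in flight to keep the path full."""
    return link_bps * rtt_s / 8


def window_limited_bps(window_bytes: float, rtt_s: float, link_bps: float) -> float:
    """Throughput when at most `window_bytes` are in flight per round trip, capped by the link."""
    return min(link_bps, window_bytes * 8 / rtt_s)


print(f"needed in flight at 100 Mbps, 150 ms: {bdp_bytes(100e6, 0.150) / 1e6:.3f} MB")

window = 256 * 1024  # hypothetical effective window, for illustration only
for rtt_s in (0.150, 0.020):
    mbps = window_limited_bps(window, rtt_s, 100e6) / 1e6
    print(f"RTT {rtt_s * 1000:.0f} ms: {mbps:.1f} Mbps")
```

With the same window, the long-haul path tops out near 14 Mbps while the nearby edge saturates the 100 Mbps link.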

Request hit ratio makes dashboards look healthy. Byte hit ratio is what protects origin egress and tail latency. A CDN serving 40 Gbps with a 95 percent byte hit ratio exposes only 2 Gbps to origin. At 80 percent byte hit ratio, the same workload pushes 8 Gbps back to origin. That 15-point delta is frequently the difference between a shield tier that looks elegant on paper and an origin fleet that melts during a purge or a viral asset launch.

| Total delivered traffic | Byte hit ratio | Origin egress required |
|---|---|---|
| 40 Gbps | 70% | 12 Gbps |
| 40 Gbps | 85% | 6 Gbps |
| 40 Gbps | 95% | 2 Gbps |
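The table is `delivered × (1 − byte hit ratio)`. The sketch below reproduces it, then contrasts request hit ratio with byte hit ratio on a made-up request mix (95 small hits, 5 large misses) to show how far the two can diverge.

```python
def origin_egress_gbps(delivered_gbps: float, byte_hit_ratio: float) -> float:
    """Bytes the cache did not serve must still come from shield or origin."""
    return delivered_gbps * (1 - byte_hit_ratio)


for ratio in (0.70, 0.80, 0.85, 0.95):
    print(f"byte hit ratio {ratio:.0%}: origin egress {origin_egress_gbps(40, ratio):.0f} Gbps")

# Hypothetical request mix: 95 small cache hits and 5 large misses.
requests = [("HIT", 20_000)] * 95 + [("MISS", 50_000_000)] * 5
hit_bytes = sum(size for status, size in requests if status == "HIT")
total_bytes = sum(size for _, size in requests)
request_hit_ratio = sum(status == "HIT" for status, _ in requests) / len(requests)
byte_hit_ratio = hit_bytes / total_bytes
print(f"request hit ratio {request_hit_ratio:.0%}, byte hit ratio {byte_hit_ratio:.2%}")
```

Both numbers come from the same log; only the byte view tells you what the origin actually has to send.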

The practical implication is simple. When engineers ask how a CDN works, the right answer is not “it caches content close to users.” The useful answer is “it removes long-haul RTT from hot paths, collapses duplicate misses, and converts byte reuse into origin protection.”

The request path is usually a decision tree, not a straight line. Whether the object is fresh, stale, partially cached, uncacheable, or key-fragmented determines both latency and origin load.

The client resolves the hostname and connects to the selected edge. Good mapping tries to minimize RTT without steering the request toward an edge that is operationally hot or likely to miss. This sounds obvious, but cache locality and network locality are often in tension. An edge that is 5 ms closer but cold can still lose badly to one that is slightly farther and already holding the object or sharing an efficient shield path.

Before the cache lookup, the edge usually normalizes parts of the request that explode cardinality without changing the response. Common examples are irrelevant query parameters, inconsistent host casing, and headers that should not participate in the key. This is where many teams accidentally sabotage their own CDN architecture. A signed URL rollout or analytics parameter can cut hit ratio hard enough to look like an origin incident.
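A minimal sketch of key normalization follows, assuming a hypothetical policy that drops a fixed set of tracking parameters, lowercases the host, and sorts the remaining query parameters. Sorting is only safe if the origin ignores parameter order, and scheme and port are left out of the key here for brevity, which a real policy may not do.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit

# Hypothetical set of parameters assumed never to change the response body.
IGNORED_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "fbclid", "gclid"}


def cache_key(url: str) -> str:
    """Build a normalized cache key: lowercase host, path, sorted non-tracking query params."""
    parts = urlsplit(url)
    host = (parts.hostname or "").lower()
    query = sorted(
        (k, v)
        for k, v in parse_qsl(parts.query, keep_blank_values=True)
        if k not in IGNORED_PARAMS
    )
    key = f"{host}{parts.path or '/'}"
    if query:
        key += "?" + urlencode(query)
    return key


a = cache_key("https://CDN.Example.com/assets/app.js?v=42&utm_source=mail")
b = cache_key("https://cdn.example.com/assets/app.js?utm_campaign=x&v=42")
print(a)       # cdn.example.com/assets/app.js?v=42
print(a == b)  # True
```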

The edge computes a key from the selected dimensions: host, path, normalized query, possibly device class, language, or encoding. If the object is fresh, it is served immediately. If it is stale but revalidatable, the edge can issue a conditional request upstream using ETag or Last-Modified. If the object is absent or marked pass, the miss path starts.
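The decision can be sketched as a small classifier. This is a simplified model, not any provider's algorithm: `CachedObject` and `lookup` are hypothetical, and freshness here ignores the Age header, heuristic freshness, and stale-while-revalidate.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple


@dataclass
class CachedObject:
    stored_at: float                     # epoch seconds when the copy was stored
    max_age: float                       # freshness lifetime in seconds
    etag: Optional[str] = None           # validator for If-None-Match
    last_modified: Optional[str] = None  # validator for If-Modified-Since


def lookup(obj: Optional[CachedObject], now: float, bypass: bool = False) -> Tuple[str, Dict[str, str]]:
    """Classify a request and return the conditional headers (if any) to send upstream."""
    if bypass:
        return "pass", {}                # policy says never cache this key
    if obj is None:
        return "miss", {}                # nothing stored: full upstream fetch
    if now - obj.stored_at < obj.max_age:
        return "fresh_hit", {}           # serve locally, no upstream work
    conditional = {}
    if obj.etag:
        conditional["If-None-Match"] = obj.etag
    if obj.last_modified:
        conditional["If-Modified-Since"] = obj.last_modified
    if conditional:
        return "stale_revalidate", conditional  # a 304 would refresh the stored copy
    return "miss", {}                    # stale with no validator: refetch the body


copy = CachedObject(stored_at=1_000.0, max_age=60, etag='"v42"')
print(lookup(copy, now=1_030.0))
print(lookup(copy, now=1_090.0))
print(lookup(None, now=1_090.0))
```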

On a clean hit, the edge serves bytes locally, often with an Age header or a provider-specific cache-status signal. Latency is now dominated by edge RTT, edge queueing, and transport state. This is the fastest and cheapest path, which is why the real art of running a CDN is key design and cacheability discipline, not simply standing up edge capacity.

If freshness has expired but the object is still revalidatable, the edge asks upstream whether its copy is still good. A 304 response is much cheaper than refetching the object body, but it is not free. Revalidation still consumes origin or shield concurrency, burns upstream RTT, and can become a storm if many edges age out the same object together.

On a miss, high-quality implementations collapse concurrent requests for the same key so one upstream fetch populates multiple waiting clients. Without collapsed forwarding, ten thousand requests arriving within milliseconds can create ten thousand origin fetches for the same object. With collapse, they become one fetch plus fan-out from cache fill. For launch-day traffic and popular video segments, this is one of the most important differences between competent and naive CDN behavior.
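Collapsed forwarding is essentially a per-key "singleflight." The asyncio sketch below is one way to express it, assuming a hypothetical async `origin_fetch` simulated with a sleep. Production caches usually add a lock timeout so a stuck fill does not hold every waiter (nginx's `proxy_cache_lock_timeout` is one example); this sketch omits that.

```python
import asyncio
from typing import Awaitable, Callable, Dict


class Collapser:
    """Collapse concurrent misses for one key into a single upstream fetch."""

    def __init__(self, fetch: Callable[[str], Awaitable[bytes]]):
        self._fetch = fetch
        self._inflight: Dict[str, asyncio.Task] = {}

    async def get(self, key: str) -> bytes:
        task = self._inflight.get(key)
        if task is None:
            # First waiter starts the fetch; later waiters join it. The entry is removed
            # when the fetch finishes (success or error), so a later miss fetches again.
            task = asyncio.ensure_future(self._fetch(key))
            self._inflight[key] = task
            task.add_done_callback(lambda _t, k=key: self._inflight.pop(k, None))
        # shield(): one cancelled client must not cancel the fetch others are waiting on.
        return await asyncio.shield(task)


origin_calls = 0


async def origin_fetch(key: str) -> bytes:
    global origin_calls
    origin_calls += 1
    await asyncio.sleep(0.05)  # simulated origin latency
    return f"body of {key}".encode()


async def main() -> None:
    collapser = Collapser(origin_fetch)
    bodies = await asyncio.gather(*(collapser.get("/video/seg-0001.m4s") for _ in range(10_000)))
    print(len(bodies), "responses from", origin_calls, "origin fetch")


asyncio.run(main())
```

Ten thousand concurrent requests for the same key produce a single origin call; if the fetch fails, every waiter receives the same error instead of retrying in parallel.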

The upstream request may go directly to origin or first to a shield tier. Shielding reduces duplicate origin fetches across many edges, but it changes the miss path from edge-to-origin into edge-to-shield-to-origin. That is good when the shield hit ratio is high and bad when the shield is cold, overloaded, or placed behind poor routing. Shield is a capacity and collapse tool first. It is not free latency.
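The trade-off can be made concrete with a mean-latency model that ignores queueing. All hit ratios and millisecond values below are hypothetical; the point is the shape of the result, where a warm shield beats direct-to-origin and a cold one can come out slightly worse.

```python
def latency_no_shield(h_edge: float, t_edge: float, t_edge_origin: float) -> float:
    """Mean latency: every request pays the edge; edge misses add the edge-to-origin fetch."""
    return t_edge + (1 - h_edge) * t_edge_origin


def latency_with_shield(h_edge: float, h_shield: float, t_edge: float,
                        t_edge_shield: float, t_shield_origin: float) -> float:
    """Every edge miss pays the shield hop; shield misses also pay shield-to-origin."""
    return t_edge + (1 - h_edge) * (t_edge_shield + (1 - h_shield) * t_shield_origin)


# Hypothetical numbers in milliseconds.
print(f"no shield:   {latency_no_shield(0.9, 20, 150):.1f} ms")
print(f"warm shield: {latency_with_shield(0.9, 0.8, 20, 30, 130):.1f} ms")
print(f"cold shield: {latency_with_shield(0.9, 0.0, 20, 30, 130):.1f} ms")
```

Latency is only half of the story. Assuming each tier collapses concurrent misses, one newly hot object requested at 50 edges costs up to 50 origin fetches without a shield and one with a single shield, which is why shield is a capacity tool first.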

While the object is fetched, the CDN may stream bytes to the client before the full object is stored. Large objects often use partial fill logic so the first client does not wait for the entire body to land on disk. Metadata such as TTL, surrogate keys, validation tokens, and object size are written alongside the body. For range-heavy workloads, the cache may store whole-object, slice-aligned, or sparse-range state depending on product design.
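For slice-aligned storage, the cache rounds each client range out to fixed-size slices and fills those upstream. The sketch below assumes a hypothetical 1 MiB slice size; real slice sizes and alignment rules are product-specific.

```python
from typing import List, Tuple

SLICE_SIZE = 1 << 20  # hypothetical 1 MiB slice size


def slices_for_range(start: int, end: int, object_size: int,
                     slice_size: int = SLICE_SIZE) -> List[Tuple[int, int]]:
    """Map an inclusive client byte range to the slice-aligned ranges a slice cache fills upstream."""
    if not 0 <= start < object_size or end < start:
        raise ValueError("unsatisfiable range")
    end = min(end, object_size - 1)  # clamp ranges that run past the end of the object
    first, last = start // slice_size, end // slice_size
    return [
        (i * slice_size, min((i + 1) * slice_size - 1, object_size - 1))
        for i in range(first, last + 1)
    ]


size = 2_621_440  # a 2.5 MiB object
print(slices_for_range(0, 1_048_575, size))          # one aligned slice
print(slices_for_range(1_000_000, 1_100_000, size))  # a small range straddling two slices
print(slices_for_range(2_000_000, 9_999_999, size))  # clamped to the object's last byte
```

A 100 KB client range that straddles a boundary still costs two slice fills, which is one reason request-level metrics understate upstream work on range-heavy traffic.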

After delivery, logs and counters feed control-plane decisions: hot-object replication, tiering, invalidation, and anti-pollution heuristics. If you are tracing how CDN caching interacts with origin servers, this is the part to instrument hard. Edge-hit ratio, shield-hit ratio, 304 rate, collapsed-request count, and upstream fetch latency are more actionable diagnostics than a single global hit-rate number.
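A sketch of turning per-request logs into those breakdowns follows. The `LogRecord` fields and status labels are hypothetical; map them to whatever your provider's cache-status header or log schema exposes.

```python
from collections import Counter
from typing import Dict, List, NamedTuple, Optional


class LogRecord(NamedTuple):
    edge: str              # hypothetical labels: HIT, MISS, COLLAPSED, REVALIDATED, PASS
    shield: Optional[str]  # HIT or MISS when the request reached the shield, else None
    ttfb_ms: float


def summarize(records: List[LogRecord]) -> Dict[str, float]:
    n = len(records)
    counts = Counter(r.edge for r in records)
    cacheable = n - counts["PASS"]  # pass traffic is reported separately, not counted as misses
    at_shield = [r for r in records if r.shield is not None]
    upstream_ttfb = [r.ttfb_ms for r in records if r.edge in ("MISS", "REVALIDATED")]
    return {
        "edge_hit_ratio": counts["HIT"] / cacheable if cacheable else 0.0,
        "shield_hit_ratio": sum(r.shield == "HIT" for r in at_shield) / len(at_shield) if at_shield else 0.0,
        "revalidation_rate": counts["REVALIDATED"] / cacheable if cacheable else 0.0,
        "collapsed_requests": counts["COLLAPSED"],
        "pass_share": counts["PASS"] / n if n else 0.0,
        "mean_upstream_ttfb_ms": sum(upstream_ttfb) / len(upstream_ttfb) if upstream_ttfb else 0.0,
    }


records = [
    LogRecord("HIT", None, 12), LogRecord("HIT", None, 15), LogRecord("HIT", None, 11),
    LogRecord("MISS", "HIT", 60), LogRecord("MISS", "MISS", 210),
    LogRecord("COLLAPSED", None, 205),  # waited on another request's fill
    LogRecord("REVALIDATED", "HIT", 48),
    LogRecord("PASS", None, 190),
]
for name, value in summarize(records).items():
    print(f"{name:24} {value:.3f}")
```

Note that the collapsed request's TTFB tracks the miss it waited on: collapse saves origin work, not latency for the waiters.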

| Request state | Upstream work | Latency shape | Operational significance |
|---|---|---|---|
| Fresh hit | None | Edge RTT plus local service time | Best case for both user and origin |
| Stale revalidate | Conditional upstream check | Usually lower than full miss, higher than hit | Common hidden source of origin load |
| Miss with collapse | One upstream fetch for many requests | First waiter pays most | Critical under burst traffic |
| Pass or bypass | Every request goes upstream | Origin RTT dominates | Expected for personalized or unsafe content |

A solid CDN architecture has at least five cooperating layers: request mapping, edge cache, shield or mid-tier cache, origin interface, and control-plane telemetry. The important question is not whether these components exist. It is where consistency, collapse, and key normalization happen, and what you can observe when they go wrong.

| Layer | Primary job | What usually breaks first |
|---|---|---|
| Mapping | Steer client to a good serving edge | Suboptimal locality, hot spots, cold-cache bias |
| Edge cache | Serve hot objects with low RTT | Key explosion, disk churn, partial-object inefficiency |
| Shield tier | Consolidate misses and protect origin | Purge bursts, low shield locality, long miss chains |
| Origin interface | Fetch, revalidate, enforce timeouts and retries | Connection storms, slow 304 paths, weak timeouts |
| Control plane | Invalidation, config rollout, telemetry | Delayed purge, inconsistent policy, poor visibility |

Why this design over simpler alternatives? Because uncached traffic is not the only problem. The hard problem is preserving the fast path while preventing coordinated expiry, key fragmentation, and request fan-out from turning nominally cacheable traffic into an origin event. A CDN that only optimizes the hit path looks great in demos and ugly on incident review timelines.

This is also where provider economics matter. For teams delivering software artifacts, video, and other cache-friendly bytes at large scale, BlazingCDN's CDN comparison is worth reviewing if you need stability and fault tolerance comparable to Amazon CloudFront while staying materially more cost-effective. The platform is positioned for flexible configuration and fast scaling under demand spikes, advertises 100% uptime, and starts at $4 per TB, or $0.004 per GB, which changes the math quickly for enterprises and large corporate clients moving serious volume.

You do not need a synthetic benchmarking lab to validate the request flow. You need timing splits, response headers, and one controlled impairment profile. The procedure below is simple, repeatable, and good enough to tell whether you are getting hits, 304-heavy revalidation, or silent bypass behavior.

```shell
origin=https://origin.example.com/assets/app.js
edge=https://cdn.example.com/assets/app.js

# Baseline: direct-to-origin timing split (DNS, TCP connect, TLS, TTFB, total).
curl -sS -o /dev/null -D headers.origin \
  -w 'origin dns=%{time_namelookup} connect=%{time_connect} tls=%{time_appconnect} ttfb=%{time_starttransfer} total=%{time_total} size=%{size_download}\n' \
  "$origin"

# First edge request: may be a miss that fills the cache.
curl -sS -o /dev/null -D headers.edge.1 \
  -w 'edge_1 dns=%{time_namelookup} connect=%{time_connect} tls=%{time_appconnect} ttfb=%{time_starttransfer} total=%{time_total} size=%{size_download}\n' \
  "$edge"

# Second edge request: should be a hit if the object is cacheable (compare Age / cache-status headers).
curl -sS -o /dev/null -D headers.edge.2 \
  -w 'edge_2 dns=%{time_namelookup} connect=%{time_connect} tls=%{time_appconnect} ttfb=%{time_starttransfer} total=%{time_total} size=%{size_download}\n' \
  "$edge"

# Conditional request with the ETag the edge returned; a 304 means revalidation works.
etag=$(grep -i '^etag:' headers.edge.2 | cut -d' ' -f2- | tr -d '\r')

curl -sS -o /dev/null -D headers.reval \
  -H "If-None-Match: $etag" \
  -w 'reval code=%{http_code} ttfb=%{time_starttransfer} total=%{time_total}\n' \
  "$edge"

# First 1 MiB as a byte range; expect 206 Partial Content.
curl -sS -o /dev/null -D headers.range \
  -H 'Range: bytes=0-1048575' \
  -w 'range code=%{http_code} size=%{size_download} ttfb=%{time_starttransfer} total=%{time_total}\n' \
  "$edge"

# Impair egress on this host: 140 ms +/- 20 ms delay and 0.5% loss (needs root; eth0 is an example interface).
sudo tc qdisc replace dev eth0 root netem delay 140ms 20ms loss 0.5%

curl -sS -o /dev/null \
  -w 'origin_impaired ttfb=%{time_starttransfer} total=%{time_total}\n' \
  "$origin"

curl -sS -o /dev/null \
  -w 'edge_impaired ttfb=%{time_starttransfer} total=%{time_total}\n' \
  "$edge"

# Remove the impairment.
sudo tc qdisc del dev eth0 root
```

What to look for:

For video and large-file delivery, also measure 206 share, average segment TTFB, and shield revalidation rate. Engineers often validate only 200 responses and miss the fact that their workload is dominated by byte ranges or stale checks, not full-object hits.
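A sketch of the range-aware view follows, using hypothetical response records and treating `.ts` and `.m4s` paths as media segments (an assumption about your naming scheme).

```python
from typing import Dict, List, NamedTuple

SEGMENT_SUFFIXES = (".ts", ".m4s")  # assumption: HLS/DASH segments use these extensions


class Response(NamedTuple):
    path: str
    status: int       # status code returned to the client
    bytes_sent: int
    ttfb_ms: float


def range_report(responses: List[Response]) -> Dict[str, float]:
    partial = [r for r in responses if r.status == 206]
    segments = [r for r in responses if r.path.endswith(SEGMENT_SUFFIXES)]
    total_bytes = sum(r.bytes_sent for r in responses)
    return {
        "share_206_requests": len(partial) / len(responses) if responses else 0.0,
        "share_206_bytes": sum(r.bytes_sent for r in partial) / total_bytes if total_bytes else 0.0,
        "avg_segment_ttfb_ms": sum(r.ttfb_ms for r in segments) / len(segments) if segments else 0.0,
    }


sample = [
    Response("/v/seg-0001.m4s", 200, 1_500_000, 40),
    Response("/v/seg-0002.m4s", 200, 1_500_000, 60),
    Response("/dl/installer.bin", 206, 8_000_000, 90),
    Response("/dl/installer.bin", 206, 8_000_000, 110),
    Response("/v/index.m3u8", 200, 2_000, 20),
]
report = range_report(sample)
for name, value in report.items():
    print(f"{name:20} {value:.3f}")
```

In this sample, 206 responses are 40% of requests but over 80% of bytes, the pattern a 200-only check would miss.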

This is where most "content delivery network explained" pieces stop being useful. A CDN improves the fast path, but it also introduces distributed state, eviction behavior, invalidation lag, and new classes of cache correctness bugs.

If responses vary on cookies, auth state, or high-cardinality query parameters, the cache key explodes. Teams often discover this indirectly after a marketing or auth rollout when hit ratio falls, origin egress rises, and no one changed TTL. The path to a fix is usually key normalization and separating truly personalized responses from assets that inherited uncacheable headers by accident.
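One way to find assets that inherited uncacheable headers by accident is to audit responses for static paths. The suffix list and messages below are hypothetical, and headers are modeled as a plain dict, so repeated headers such as multiple `Set-Cookie` lines are collapsed into one entry.

```python
from typing import Dict, List

STATIC_SUFFIXES = (".js", ".css", ".png", ".woff2", ".mp4")  # hypothetical asset classes
KEY_FRAGMENTING_VARY = {"cookie", "authorization", "*"}


def cacheability_problems(path: str, headers: Dict[str, str]) -> List[str]:
    """Flag headers that commonly make a static asset uncacheable or fragment its cache key."""
    if not path.endswith(STATIC_SUFFIXES):
        return []  # personalized routes are out of scope for this audit
    h = {k.lower(): v for k, v in headers.items()}
    problems = []
    directives = {
        d.split("=", 1)[0].strip().lower()
        for d in h.get("cache-control", "").split(",")
        if d.strip()
    }
    if directives & {"private", "no-store"}:
        problems.append("cache-control forbids shared caching")
    if "set-cookie" in h:
        problems.append("set-cookie present")
    vary = {v.strip().lower() for v in h.get("vary", "").split(",") if v.strip()}
    if vary & KEY_FRAGMENTING_VARY:
        problems.append("vary: " + ",".join(sorted(vary & KEY_FRAGMENTING_VARY)))
    return problems


print(cacheability_problems(
    "/assets/app.js",
    {"Cache-Control": "private, max-age=0", "Set-Cookie": "sid=abc", "Vary": "Accept-Encoding, Cookie"},
))
# ['cache-control forbids shared caching', 'set-cookie present', 'vary: cookie']
```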

A 304 is cheaper than a 200, but a fleet of 304s can still pin origin connections and saturate shield. Short max-age values plus synchronized expiry create a neat graph of “cache correctness” and a messy graph of origin concurrency. If you only watch hit ratio, you miss the difference between serving fresh hits and asking origin for permission every few seconds.
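A back-of-envelope estimate of revalidation load follows, assuming every edge sees every hot object at least once per max-age window and no shield collapse; the edge and object counts are hypothetical. Random TTL jitter, shown as a hypothetical `jittered_max_age` helper, is one common way to desynchronize expiry. It is a mitigation swapped in here rather than something this section prescribes; `stale-while-revalidate` (RFC 5861) is another.

```python
import random
from typing import Optional


def steady_revalidations_per_s(edges: int, hot_objects: int, max_age_s: float) -> float:
    """Upstream conditional requests per second if every edge re-checks every hot object once per max-age."""
    return edges * hot_objects / max_age_s


def jittered_max_age(base_s: float, spread: float = 0.1, rng: Optional[random.Random] = None) -> float:
    """Spread expiry by +/- `spread` of the base TTL so copies stored together do not expire together."""
    if rng is None:
        rng = random.Random()
    return base_s * (1 + rng.uniform(-spread, spread))


print(steady_revalidations_per_s(300, 2_000, 5))    # 120000.0
print(steady_revalidations_per_s(300, 2_000, 300))  # 2000.0

rng = random.Random(7)
print([round(jittered_max_age(300, rng=rng)) for _ in range(5)])
```

Raising max-age from 5 s to 300 s cuts steady-state revalidations 60x in this model; jitter does not reduce the average, it only flattens the spike after a purge or deploy.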

Video files, software packages, and installer images stress slice caching, range assembly, disk IOPS, and object eviction policy. A single 10 GB object can evict a large set of small hot objects if your cache is not segmented or admission-controlled carefully. Range-heavy traffic also makes request hit ratio especially misleading, because one logical object may produce many upstream interactions.
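A sketch of size-segmented admission follows, assuming a hypothetical policy where objects above a size threshold get their own byte budget. Real caches use richer admission and eviction schemes; this only demonstrates the isolation property.

```python
from collections import OrderedDict
from typing import Optional


class SegmentedLRU:
    """Size-segmented LRU (hypothetical policy): large objects live in their own byte budget,
    so one multi-GB file cannot evict thousands of small hot objects."""

    def __init__(self, small_budget: int, large_budget: int, large_threshold: int):
        self.large_threshold = large_threshold
        self.budget = {"small": small_budget, "large": large_budget}
        self.used = {"small": 0, "large": 0}
        self.lru = {"small": OrderedDict(), "large": OrderedDict()}  # key -> size in bytes

    def get(self, key: str) -> Optional[int]:
        """Return the stored size on a hit (and refresh recency), else None."""
        for seg in self.lru.values():
            if key in seg:
                seg.move_to_end(key)
                return seg[key]
        return None

    def put(self, key: str, size: int) -> bool:
        name = "large" if size > self.large_threshold else "small"
        if size > self.budget[name]:
            return False  # admission control: reject what can never fit
        for seg_name, seg in self.lru.items():  # drop any older copy of this key
            if key in seg:
                self.used[seg_name] -= seg.pop(key)
        seg = self.lru[name]
        while self.used[name] + size > self.budget[name]:
            _, evicted_size = seg.popitem(last=False)  # evict least recently used, this segment only
            self.used[name] -= evicted_size
        seg[key] = size
        self.used[name] += size
        return True


cache = SegmentedLRU(small_budget=1_000_000_000, large_budget=20_000_000_000, large_threshold=100_000_000)
for i in range(5_000):
    cache.put(f"/img/{i}.webp", 100_000)  # 500 MB of small hot objects
cache.put("/downloads/installer.iso", 10_000_000_000)  # 10 GB object lands in the large segment
small_still_cached = sum(cache.get(f"/img/{i}.webp") is not None for i in range(5_000))
print(small_still_cached)  # 5000
```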

Shield tiers lower origin fan-out, but they lengthen the path for every miss and revalidation. Put shielding in the wrong place or size it for average load instead of burst concurrency, and it becomes a queueing layer that hides origin health until a purge or launch event. Engineers sometimes misread this as an “edge issue” because client-facing RTT rises while origin dashboards still look calm.
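Little's law (mean requests in flight = arrival rate × mean time in system) makes the sizing gap explicit. The rates and latencies below are hypothetical; the burst case assumes a purge multiplies the shield's miss rate twentyfold while upstream latency quadruples. Little's law describes averages over a steady period, so treat the burst figure as an order-of-magnitude estimate.

```python
def in_flight(arrivals_per_s: float, latency_s: float) -> float:
    """Little's law: mean requests in flight = arrival rate x mean time in system."""
    return arrivals_per_s * latency_s


steady = in_flight(2_000, 0.150)  # hypothetical steady miss rate at the shield, 150 ms upstream
burst = in_flight(40_000, 0.600)  # hypothetical purge burst: 20x misses, upstream latency 4x
print(f"steady: {steady:.0f} in flight, burst: {burst:.0f} in flight ({burst / steady:.0f}x)")
```

A shield pool provisioned for roughly 300 concurrent upstream fetches faces about 80 times that during the burst, because rate and latency multiply.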

Fast purge sounds binary, but the operational reality is propagation delay, race windows, and partial visibility. If you mutate frequently and rely on purge instead of immutable object versioning, you have to reason about stale reads during rollout. Surrogate keys help, but they also concentrate risk when one key maps to a very large object set.
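A sketch of content-hash versioning follows, assuming a hypothetical build step that rewrites asset names. Because new content gets a new URL, the old object can simply age out, and the versioned path can be served with `Cache-Control: public, max-age=31536000, immutable` (the `immutable` directive is defined in RFC 8246).

```python
import hashlib
from pathlib import PurePosixPath


def versioned_path(path: str, body: bytes, digest_len: int = 12) -> str:
    """Embed a content hash in the file name so new content gets a new URL instead of a purge."""
    p = PurePosixPath(path)
    digest = hashlib.sha256(body).hexdigest()[:digest_len]
    return str(p.with_name(f"{p.stem}.{digest}{p.suffix}"))


v1 = versioned_path("/assets/app.js", b"console.log('v1');")
v2 = versioned_path("/assets/app.js", b"console.log('v2');")
print(v1)
print(v2)
```

Only the small HTML or manifest that references these names needs a short TTL or a purge, which shrinks the race window to one object.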

The standard misses are predictable: no edge versus shield breakdown, no view of collapsed requests, no distinction between pass and miss, and no per-status byte hit ratio. Without those, incident response becomes guesswork. A service can report a respectable overall cache rate while p95 is driven by a small class of pass requests or a noisy burst of 304 revalidation traffic.

This model fits workloads with real byte reuse: software downloads, package repositories, video segments, model artifacts, static site assets, public API GET responses with sensible cache keys, and enterprise web properties with global audiences. It also fits teams that can own response headers, cache-key policy, and invalidation discipline. If you can make content immutable or at least revalidatable, the economics and latency gains are straightforward.

It fits less well when most responses are personalized, write-heavy, or require strict read-after-write consistency at the edge. Think session-bound HTML, user-specific API responses, or workloads with long-tail objects that are never requested twice before eviction. In those cases, a CDN is still useful for connection termination, transport optimization, and selective cache of static subresources, but the edge will not rescue a fundamentally uncacheable application design.

Budget and team shape matter too. If your traffic is modest and regional, the win may be mostly operational simplicity and origin protection. If you are delivering petabytes, cost per GB and miss behavior become first-order architecture concerns. That is why cost-effective platforms with flexible controls matter more to large enterprises than glossy feature matrices do.

This week, pick one hot object class and one historically annoying object class. Measure direct-origin versus CDN TTFB under clean network conditions and again with 140 ms delay plus 0.5 percent loss. Collect edge-hit ratio, byte hit ratio, revalidation rate, collapsed-request count, and origin fetch latency for each. If you cannot answer which of those metrics moved first during your last spike, you do not yet have enough visibility into how a CDN works in your stack.

The sharper question for discussion is not whether your CDN is “fast.” It is whether your cache key, expiry policy, and revalidation strategy keep the miss path from becoming your hidden production control plane. That is the benchmark that separates a CDN deployment from an architecture.
