Best CDN for Video Streaming in 2026: Full Comparison with Real Performance Data

A single-stream PUT to Wasabi's us-east-1 region from a Frankfurt origin averages 38–45 MB/s in Q2 2026 measurements. That is perfectly adequate for archival workloads. It is not adequate when you need to backfill 14 TB of VOD assets before a weekend launch, or when your users in São Paulo stare at a spinner because every byte travels through US or EU storage. Wasabi cloud storage performance is bounded by physics, by the S3-compatible protocol layer, and by a few configuration defaults that almost everyone leaves untouched. This article gives you 11 concrete techniques — from client-side tuning you can apply in minutes to CDN-layer architecture that changes your delivery profile entirely — along with a diagnostic playbook for isolating which bottleneck is actually yours.

Wasabi prices storage at $6.99/TB/month (as of May 2026) with no egress fees on reasonable-use workloads. That pricing model means Wasabi optimizes for capacity economics, not per-request latency. Three things work against you by default:

Before tuning anything, quantify the problem. Run a controlled Wasabi speed test from the same network and region your production workload uses. Generate a 1 GB test object locally, then upload it with your current client settings and record wall-clock time, CPU utilization, and network saturation. Repeat with a 10 MB object to isolate small-file overhead. Record TTFB on a GET for the same objects. These four numbers — large-object PUT throughput, small-file PUT latency, GET TTFB, and GET throughput — define your baseline. Every optimization below targets at least one of them.
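A minimal sketch of a timing harness for the baseline described above. It assumes a hypothetical `transfer` callable that performs one PUT or GET with your own client and returns the number of bytes moved; `fake_upload` is only a stand-in so the example runs without credentials.

```python
import time
from typing import Callable, Tuple


def measure_throughput(transfer: Callable[[], int]) -> Tuple[float, float]:
    """Run `transfer` once and return (elapsed seconds, throughput in MB/s).

    `transfer` is a hypothetical callable that performs one PUT or GET
    and returns the number of bytes it moved.
    """
    start = time.perf_counter()
    byte_count = transfer()
    elapsed = time.perf_counter() - start
    # Guard against a zero-length interval on a no-op transfer.
    throughput_mb_s = byte_count / elapsed / 1e6 if elapsed > 0 else float("inf")
    return elapsed, throughput_mb_s


def fake_upload() -> int:
    """Stand-in for a real upload: sleeps briefly and reports 10 MB moved."""
    time.sleep(0.05)
    return 10_000_000


elapsed_s, mb_per_s = measure_throughput(fake_upload)
print(f"{elapsed_s:.3f} s, {mb_per_s:.1f} MB/s")
```

Swap `fake_upload` for a function that calls your real client, and run it once per object size to collect the four baseline numbers.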

For objects above 500 MB, increasing the part size from the default 8 MB to 64 or 128 MB reduces the number of round trips by 8–16×. Each part completion requires a server acknowledgment, so fewer parts means less idle time waiting. On a 1 Gbps link with 20 ms RTT to us-east-1, this single change typically lifts throughput from ~40 MB/s to ~90 MB/s for large objects.
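The round-trip reduction is simple arithmetic on the part count. The sketch below assumes one acknowledgment per part and assumes that the AWS S3 limit of 10,000 parts per upload also applies to Wasabi, which is worth confirming for your account.

```python
MIB = 1024 * 1024
MAX_PARTS = 10_000  # AWS S3 multipart limit; assumed (not confirmed) for Wasabi


def part_count(object_bytes: int, part_bytes: int) -> int:
    """Number of multipart parts needed for an object (ceiling division)."""
    parts = -(-object_bytes // part_bytes)
    if parts > MAX_PARTS:
        raise ValueError(f"{parts} parts exceeds the {MAX_PARTS}-part limit")
    return parts


object_size = 1024 * MIB  # a 1 GiB object
for part_mib in (8, 64, 128):
    print(f"{part_mib:>3} MiB parts -> {part_count(object_size, part_mib * MIB)} parts")
```

Going from 8 MiB to 64 MiB parts cuts the part count by 8×, and 128 MiB parts cut it by 16×, which is where the 8–16× figure comes from.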

Wasabi does not document a per-connection throttle, but empirical 2026 testing shows diminishing returns beyond 32 concurrent streams per bucket. Start at 16 threads and increment. Monitor for HTTP 503 SlowDown responses — if you see them, back off by 25%.
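One way to express the ramp-and-back-off rule as code. This is a sketch: the step size of 4 is an illustrative assumption, while the ceiling of 32 and the 25% back-off come from the guidance above.

```python
def next_concurrency(current: int, saw_slowdown: bool,
                     step: int = 4, ceiling: int = 32) -> int:
    """Pick the thread count for the next batch of uploads.

    Backs off by 25% after an HTTP 503 SlowDown; otherwise ramps up by
    `step` (an assumed increment) until `ceiling` is reached.
    """
    if saw_slowdown:
        return max(1, int(current * 0.75))
    return min(ceiling, current + step)


threads = 16
for saw_503 in (False, False, True, False):
    threads = next_concurrency(threads, saw_503)
    print(threads)
```

Feed it the outcome of each batch; it never drops below one thread and never exceeds the ceiling.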

Wasabi added ap-southeast-2 (Sydney) and eu-south-1 (Milan) in late 2025. If you were routing APAC traffic to ap-northeast-1 (Tokyo) or EU traffic to eu-central-1 (Amsterdam), re-evaluate. A 40 ms RTT reduction on a 32-thread upload of a 10 GB file saves roughly 12 seconds of aggregate wait time.
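The text does not state the part size behind the 12-second figure. As a rough check, the sketch below assumes 32 MiB parts and one acknowledgment round trip per part; under those assumptions the aggregate saving lands in the same range. The result is wait time summed across all threads, not wall-clock time.

```python
GIB = 1024 ** 3
MIB = 1024 ** 2


def aggregate_wait_saved(object_bytes: int, part_bytes: int,
                         rtt_saved_s: float) -> float:
    """Total acknowledgment wait saved, summed over every part."""
    parts = -(-object_bytes // part_bytes)  # ceiling division
    return parts * rtt_saved_s


# 32 MiB parts is an assumption chosen for illustration.
saved_s = aggregate_wait_saved(10 * GIB, 32 * MIB, 0.040)
print(f"{saved_s:.1f} s of aggregate wait saved")
```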

If your upload origin runs Linux 5.x+, switching the congestion control algorithm from CUBIC to BBR typically improves throughput on lossy or high-RTT paths by 15–30%. This is a sysctl change, not an application change, and it affects all outbound connections to Wasabi.
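Before benchmarking, it helps to confirm which algorithm is actually active. A small sketch that reads the standard Linux procfs entry; on other platforms it returns `None`.

```python
from pathlib import Path
from typing import Optional

# Standard Linux location of the active TCP congestion control algorithm.
SYSCTL_PATH = Path("/proc/sys/net/ipv4/tcp_congestion_control")


def current_congestion_control(path: Path = SYSCTL_PATH) -> Optional[str]:
    """Return the active algorithm name (e.g. 'cubic' or 'bbr'), or None."""
    try:
        return path.read_text().strip()
    except OSError:
        return None  # not Linux, or procfs is not readable


algo = current_congestion_control()
print(algo or "not available on this platform")
# Switching is done with sysctl, typically:
#   net.core.default_qdisc = fq
#   net.ipv4.tcp_congestion_control = bbr
```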

A TLS 1.3 handshake costs ~1 RTT, on top of the TCP handshake. Over thousands of small-file PUTs, connection churn becomes the dominant cost. Configure your S3 client to maintain a persistent connection pool. In the AWS SDK, set the maximum connections parameter to match your concurrency target, and do not set the connection idle timeout below 30 seconds, so that pooled connections survive between requests.
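To see why connection churn dominates, compare the handshake time with and without reuse. The sketch assumes 2 RTTs per new connection (1 for TCP, 1 for TLS 1.3); the file count, RTT, and pool size are illustrative values.

```python
def handshake_seconds(new_connections: int, rtt_s: float,
                      rtts_per_handshake: int = 2) -> float:
    """Aggregate time spent on handshakes for a number of new connections."""
    return new_connections * rtts_per_handshake * rtt_s


files = 10_000      # small-file PUTs (illustrative)
rtt_s = 0.020       # 20 ms round trip (illustrative)
pool_size = 16      # persistent connections (illustrative)

no_reuse_s = handshake_seconds(files, rtt_s)      # one connection per PUT
pooled_s = handshake_seconds(pool_size, rtt_s)    # handshake once per pooled connection
print(f"no reuse: ~{no_reuse_s:.0f} s, pooled: ~{pooled_s:.2f} s")
```

The numbers are aggregate handshake time across all connections, so parallel uploads see a smaller wall-clock effect, but the ratio between the two cases holds.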

Wasabi charges by stored bytes. For compressible payloads (logs, JSON, CSV), gzip or zstd compression before upload reduces both transfer time and storage cost. A 4:1 compression ratio on a 20 GB log archive turns a 3-minute upload into a 45-second one. Store the Content-Encoding metadata so downstream consumers can decompress transparently.
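A minimal sketch of compressing a payload before upload, using only the standard library. It returns the compressed body plus the header to attach to the object; how you pass that header depends on your S3 client.

```python
import gzip
from typing import Dict, Tuple


def compress_for_upload(payload: bytes) -> Tuple[bytes, Dict[str, str]]:
    """Gzip a payload and return (body, headers to store with the object)."""
    body = gzip.compress(payload)
    headers = {"Content-Encoding": "gzip"}
    return body, headers


# Repetitive log lines stand in for a real, compressible payload.
sample = b'{"level": "info", "msg": "request served"}\n' * 10_000
body, headers = compress_for_upload(sample)
print(f"{len(sample)} -> {len(body)} bytes, headers={headers}")
```

Real-world ratios depend on the data; measure on a representative sample before assuming 4:1.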

Wasabi does not offer a native equivalent of AWS S3 Transfer Acceleration. The workaround: route uploads through a VPS or relay node in the same metro as your Wasabi region. Providers such as Hetzner, Vultr, and OVH operate in or near some of the metros that host Wasabi's primary regions (Ashburn, Amsterdam, and Tokyo); check each provider's current location list before committing. Upload from your origin to the relay over the public internet; the relay-to-Wasabi leg then traverses a local or peered path, typically with sub-2 ms RTT.

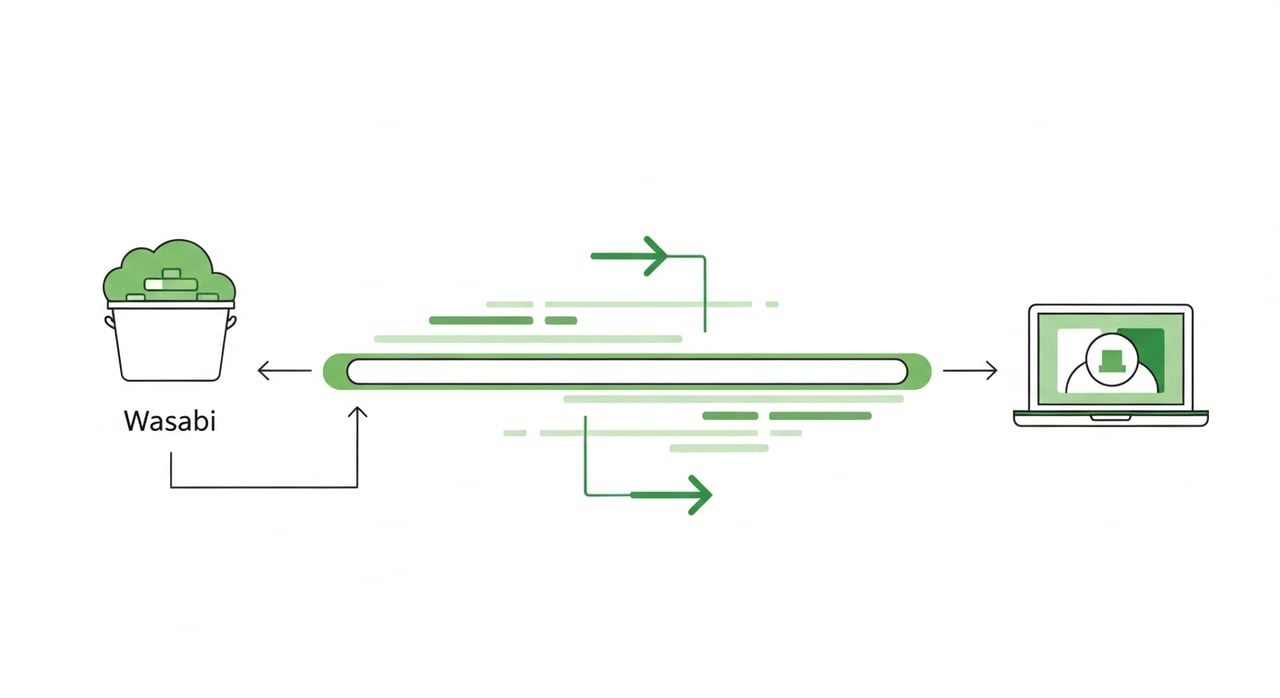

This is the highest-impact change for delivery performance. Wasabi stores; it was never designed to serve at edge speed. Placing a CDN pull zone in front of your Wasabi bucket means the first request for an object is the only one that hits the origin. Every subsequent request serves from edge cache with sub-20 ms TTFB in most metros.

For teams running media delivery, software distribution, or SaaS asset pipelines at scale, BlazingCDN's volume-based pricing pairs particularly well with Wasabi's free-egress model. BlazingCDN delivers fault tolerance and uptime on par with Amazon CloudFront at a fraction of the cost, starting at $4/TB for lower volumes and scaling down to $2/TB at 2 PB+ commitments. For an organization storing 100 TB in Wasabi and delivering 100 TB/month, the combined storage-plus-delivery cost comes to roughly $700 + $350 = $1,050/month; the $350 delivery figure implies a rate of about $3.50/TB, between the two published tiers. Try matching that on S3 + CloudFront.
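The monthly figure can be reproduced with a few lines of arithmetic. This sketch uses the $6.99/TB storage price quoted earlier; the $3.50/TB delivery rate is an assumption inferred from the $350 figure, not a published tier.

```python
WASABI_USD_PER_TB = 6.99  # storage price quoted earlier (as of May 2026)


def monthly_cost(stored_tb: float, delivered_tb: float,
                 cdn_usd_per_tb: float) -> float:
    """Combined monthly storage and CDN delivery cost in USD."""
    return stored_tb * WASABI_USD_PER_TB + delivered_tb * cdn_usd_per_tb


# $4.00 is the entry tier from the text; $3.50 is an inferred rate.
for rate in (4.00, 3.50):
    total = monthly_cost(stored_tb=100, delivered_tb=100, cdn_usd_per_tb=rate)
    print(f"${rate:.2f}/TB delivery -> ${total:,.0f}/month")
```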

When a cache-cold object gets requested from 30 edge locations simultaneously, 30 GET requests hit your Wasabi bucket. Origin Shield collapses those into a single origin fetch. This matters for launch-day traffic patterns and viral content distribution where cache fill storms can saturate Wasabi's per-bucket throughput.
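The collapsing behavior can be illustrated with a small in-process model. This is not how any particular CDN implements Origin Shield; it is a sketch of the request-coalescing idea, with a hypothetical `slow_origin` function standing in for a Wasabi GET.

```python
import threading
import time
from typing import Callable, Dict, List


class SingleFlight:
    """Collapse concurrent fetches for the same key into one origin call."""

    def __init__(self, fetch_origin: Callable[[str], bytes]) -> None:
        self._fetch_origin = fetch_origin
        self._lock = threading.Lock()
        self._cache: Dict[str, bytes] = {}
        self._inflight: Dict[str, threading.Event] = {}

    def get(self, key: str) -> bytes:
        with self._lock:
            if key in self._cache:
                return self._cache[key]
            event = self._inflight.get(key)
            leader = event is None
            if leader:
                # First requester for this key: it will fetch from the origin.
                event = threading.Event()
                self._inflight[key] = event
        if leader:
            try:
                body = self._fetch_origin(key)
                with self._lock:
                    self._cache[key] = body
            finally:
                with self._lock:
                    del self._inflight[key]
                event.set()  # wake the followers
            return body
        event.wait()
        with self._lock:
            # Raises KeyError if the leader's fetch failed; a real shield
            # would retry or propagate the error instead.
            return self._cache[key]


origin_calls: List[str] = []


def slow_origin(key: str) -> bytes:
    """Hypothetical origin fetch: records the call and simulates latency."""
    origin_calls.append(key)
    time.sleep(0.05)
    return b"segment-bytes"


shield = SingleFlight(slow_origin)
results: List[bytes] = []
threads = [
    threading.Thread(target=lambda: results.append(shield.get("video/seg-0001.ts")))
    for _ in range(30)
]
for t in threads:
    t.start()
for t in threads:
    t.join()
print(len(results), "edge requests,", len(origin_calls), "origin fetch(es)")
```

Thirty simulated edge requests arrive while the object is cold, and only the first one reaches the origin; the rest wait for its result.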

Wasabi lets you set Cache-Control metadata per object on upload. For immutable assets (versioned JS bundles, video segments, firmware binaries), set max-age to 31536000 (one year) and mark as immutable. For mutable assets, use shorter TTLs with stale-while-revalidate to avoid thundering-herd revalidation. The CDN respects these headers; the quality of your cache policy directly determines your cache-hit ratio and therefore your effective Wasabi egress volume.
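A small helper that builds the two header variants described above. The one-year `max-age` comes from the text; the 300-second TTL and 60-second `stale-while-revalidate` window for mutable assets are illustrative assumptions to tune for your content.

```python
ONE_YEAR_S = 31_536_000


def cache_control(immutable: bool, max_age_s: int = 300,
                  stale_while_revalidate_s: int = 60) -> str:
    """Build a Cache-Control value for an object about to be uploaded."""
    if immutable:
        # Versioned assets never change under the same key.
        return f"max-age={ONE_YEAR_S}, immutable"
    # Mutable assets: short TTL, serve stale while the edge revalidates.
    return (f"max-age={max_age_s}, "
            f"stale-while-revalidate={stale_while_revalidate_s}")


print(cache_control(immutable=True))
print(cache_control(immutable=False))
```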

If your users span three or more continents, a single-region Wasabi bucket behind a single CDN origin creates an asymmetric latency profile. Replicate critical assets to a second Wasabi region (e.g., eu-central-1 and ap-northeast-1) and configure your CDN with geo-aware origin selection. CDN edge in Frankfurt pulls from Amsterdam; edge in Tokyo pulls from Tokyo. This shaves 80–150 ms off origin-fetch latency for cache misses, which matters most for long-tail content with low hit rates.
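Geo-aware origin selection boils down to choosing the replica with the lowest measured RTT from each edge. A sketch with hypothetical RTT values; in practice this logic lives in the CDN's origin-routing configuration rather than in your own code.

```python
from typing import Dict


def pick_origin(rtt_ms_by_region: Dict[str, float]) -> str:
    """Return the Wasabi region with the lowest measured RTT."""
    return min(rtt_ms_by_region, key=rtt_ms_by_region.get)


# Hypothetical RTT measurements (ms) from two CDN edges to each replica.
frankfurt_edge = {"eu-central-1": 8.0, "ap-northeast-1": 230.0}
tokyo_edge = {"eu-central-1": 235.0, "ap-northeast-1": 3.0}

print("Frankfurt edge ->", pick_origin(frankfurt_edge))
print("Tokyo edge     ->", pick_origin(tokyo_edge))
```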

Tuning without observability is guesswork. Here is a minimal diagnostic sequence to validate each change:

| Symptom | Diagnostic | Likely fix (technique number, in the order presented above) |
|---|---|---|
| Large uploads under 50 MB/s on >1 Gbps link | Check thread count and part size; confirm BBR is active | #1 (part size), #2 (concurrency), #4 (BBR) |
| Small-file PUTs >200 ms each | Trace TLS handshake count; check connection reuse | #5 (connection reuse) |
| GET TTFB >300 ms for repeat requests | Verify CDN cache-hit header; check Cache-Control on object | #8 (CDN pull zone), #10 (Cache-Control) |
| 503 SlowDown errors during bulk operations | Reduce concurrency; implement exponential backoff | #2 (reduce concurrency), #9 (Origin Shield) |
| High TTFB for users in APAC/LATAM | Measure RTT to origin region; check if closer region exists | #3 (closer region), #11 (multi-region origins) |

Rollback is straightforward: each technique is independent. If raising concurrency triggers 503s, drop threads without touching part size. If BBR performs worse on a specific path (rare, but possible on very low-loss links), revert to CUBIC. Keep your baseline numbers and compare after each change in isolation.
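The exponential backoff mentioned in the table for 503 SlowDown responses can be sketched as follows. It uses the common "full jitter" variant; the 0.5-second base and 30-second cap are illustrative assumptions, not Wasabi recommendations.

```python
import random
from typing import Optional


def backoff_delay(attempt: int, base_s: float = 0.5, cap_s: float = 30.0,
                  rng: Optional[random.Random] = None) -> float:
    """Seconds to sleep before retry number `attempt` (0-based).

    The upper bound doubles with each attempt up to `cap_s`; the actual
    delay is drawn uniformly from [0, bound] so clients do not retry in
    lockstep.
    """
    if rng is None:
        rng = random.Random()
    bound = min(cap_s, base_s * (2 ** attempt))
    return rng.uniform(0.0, bound)


for attempt in range(7):
    bound = min(30.0, 0.5 * 2 ** attempt)
    print(f"attempt {attempt}: sleep up to {bound:.1f} s, "
          f"drew {backoff_delay(attempt):.2f} s")
```

Call it after each 503 and sleep for the returned duration before retrying the failed request.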

Generate synthetic objects of known sizes (10 MB, 1 GB, 10 GB), upload and download them from the same network path your production workload uses, and record wall-clock time. Run each test at least three times at different hours to account for time-of-day variance. Use curl timing output or your SDK's built-in metrics, not browser-based tools.

Any CDN that supports S3-compatible origins works. The selection criteria that matter are pull-zone origin-shield support, per-TB pricing at your volume tier, and edge presence in the regions where your users concentrate. BlazingCDN, Bunny, and CloudFront all integrate cleanly with Wasabi's endpoint format.

As of May 2026, Wasabi's free-egress policy applies as long as monthly egress does not exceed your active storage volume. If you store 100 TB and deliver 80 TB/month through a CDN, you pay zero egress. Exceeding the storage-to-egress ratio triggers per-GB charges — read the current Wasabi pricing FAQ for exact thresholds.

The most common root cause of slow Wasabi uploads is low concurrency combined with small multipart part sizes. A 10 Gbps link cannot be saturated by 4 threads uploading 8 MB parts because the per-thread throughput is round-trip-limited. Increasing to 16–32 threads with 64 MB parts typically resolves this immediately.

Serving video directly from Wasabi is technically possible, but you should not do it. Wasabi's per-request latency and lack of edge presence make it unsuitable for live or low-latency VOD delivery. Use Wasabi as your canonical storage layer and front it with a CDN. The CDN handles delivery; Wasabi handles durability and cost-efficient retention.

Pick one: measure your current cache-hit ratio on Wasabi-originated assets, or run a controlled upload benchmark with 8 MB vs. 64 MB parts and 4 vs. 16 threads. Post the before-and-after numbers. The delta will tell you whether your bottleneck is on the ingest side or the delivery side — and that determines which of these 11 techniques to prioritize first.
