Best Video Streaming CDN in 2026? 7 Providers Compared With Real Performance Data

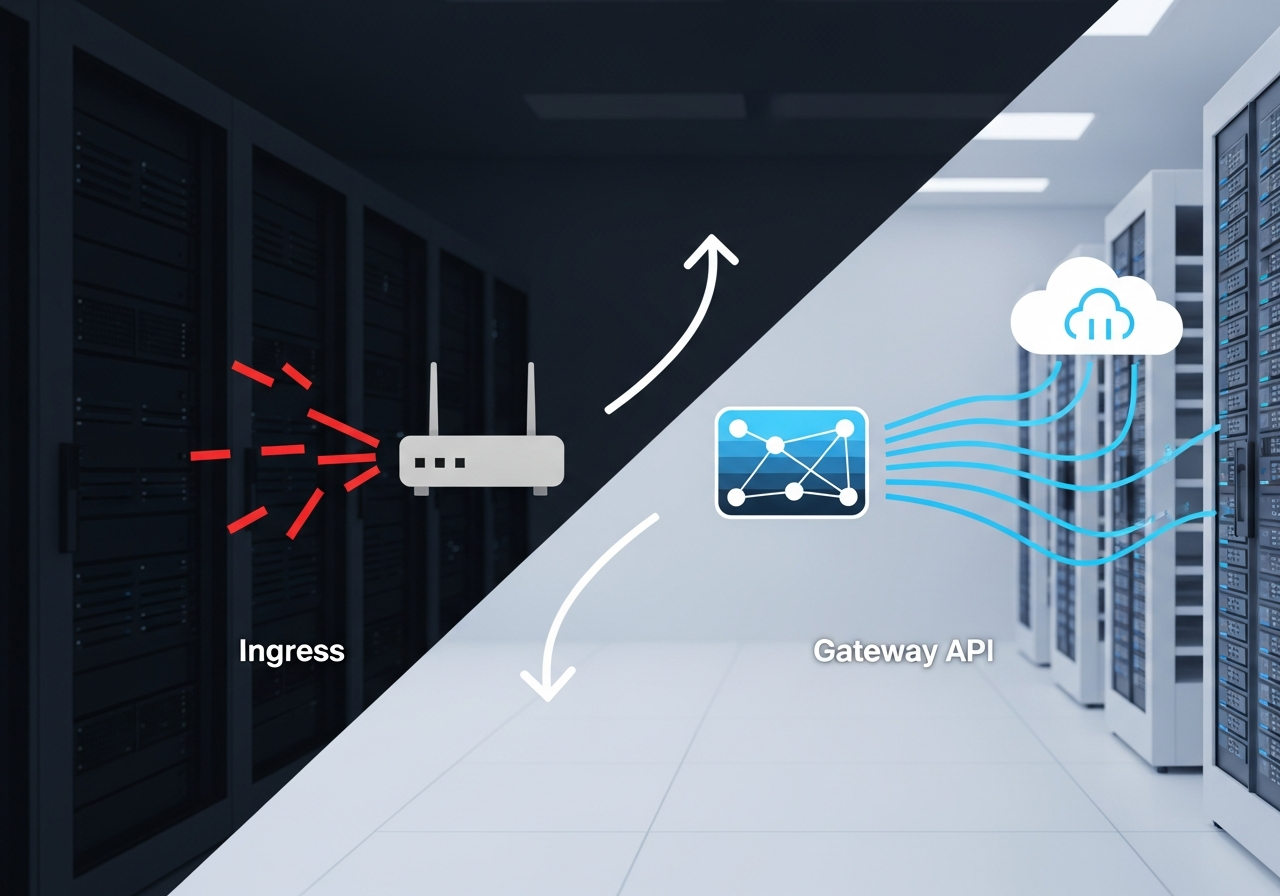

If you are planning an Ingress to Gateway API migration for an enterprise Kubernetes platform, the real question is not whether Gateway API is newer. It is whether the operational model, policy boundaries, conformance profile, and controller support are mature enough for your clusters, teams, and change window. This article compares four options you are likely to evaluate in practice: staying on Kubernetes Ingress with ingress-nginx, moving to Istio Gateway API support, moving to Kong Gateway Operator and Gateway API, or moving to Envoy Gateway. These are included because they represent the common decision paths in enterprise clusters: keep the incumbent, adopt Gateway API through a service mesh stack, adopt it through an API gateway stack, or adopt it through a Kubernetes-native Envoy control plane.

The scope here is north-south traffic management for enterprise clusters, with emphasis on migration patterns, multi-team ownership, policy attachment, coexistence, and switching costs. It does not cover service mesh east-west architecture in depth, L7 API monetization, or CDN selection. Readers comparing Kubernetes Ingress with Gateway API usually need a migration and governance answer first, not a broad tour of adjacent product categories.

This decision typically arises in three situations. First, your platform team has accumulated years of ingress-nginx annotations and can no longer reason about blast radius across app teams. Second, multiple business units need delegated route ownership while security, networking, and platform teams retain listener, TLS, and infrastructure control. Third, you are writing an RFP or internal scorecard and need to decide whether to standardize on Gateway API before your next cluster refresh.

For most enterprises, the core decision is not Ingress versus Gateway API in the abstract. It is whether your chosen controller implements enough of Gateway API, with enough operational maturity, to replace the annotation-heavy behavior your applications already depend on. That is why this article compares migration targets, not just specifications.

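The comparison uses six criteria that affect production cutovers and long-term TCO: governance and delegation, migration friction, conformance and roadmap, operations, observability, and commercial terms.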

Weights for a default enterprise platform scorecard are: governance and delegation 25%, migration friction 20%, conformance and roadmap 20%, operations 15%, observability 10%, commercial terms 10%. If you run a centralized platform with strict separation of duties, increase governance and delegation. If you are replacing a fragile ingress-nginx estate under time pressure, increase migration friction and coexistence.
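The weighting above can be turned into a small scoring helper. The sketch below is a minimal illustration, not a prescribed tool: the 0 to 5 rating scale, the function names, and the example ratings are all assumptions made for the example, and only the default weights come from the scorecard described here.

```python
# Default weights as stated in the scorecard; they sum to 1.0.
DEFAULT_WEIGHTS = {
    "governance_delegation": 0.25,
    "migration_friction": 0.20,
    "conformance_roadmap": 0.20,
    "operations": 0.15,
    "observability": 0.10,
    "commercial_terms": 0.10,
}


def weighted_score(ratings, weights=DEFAULT_WEIGHTS):
    """Return the weighted average of per-criterion ratings."""
    missing = set(weights) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(ratings[name] * w for name, w in weights.items()) / total_weight


def reweight(weights, **overrides):
    """Override selected weights, then rescale so the result sums to 1."""
    merged = {**weights, **overrides}
    total = sum(merged.values())
    return {name: w / total for name, w in merged.items()}


# Hypothetical ratings (assumed 0-5 scale) for an unnamed candidate.
example_ratings = {
    "governance_delegation": 4,
    "migration_friction": 3,
    "conformance_roadmap": 4,
    "operations": 3,
    "observability": 3,
    "commercial_terms": 2,
}

default_result = weighted_score(example_ratings)

# A centralized platform with strict separation of duties might raise
# the governance weight; the other weights shrink proportionally.
strict_weights = reweight(DEFAULT_WEIGHTS, governance_delegation=0.35)
strict_result = weighted_score(example_ratings, strict_weights)
```

Rescaling after an override keeps scores comparable across scorecards, which matters if different teams adjust the weights for their own profile.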

Data for this analysis is drawn from publicly stated product behavior, release documentation, conformance disclosures where vendors publish them, and field behavior commonly observed by platform teams during production rollouts. Where exact figures such as support pricing or feature-level conformance are not publicly disclosed, this article says so rather than guessing. BlazingCDN is not one of the compared products in the main evaluation, but it is mentioned later as a relevant edge delivery alternative for teams also reviewing external traffic delivery and egress economics.

ingress-nginx is the incumbent in a large number of enterprise clusters. It remains the practical baseline because many application teams already depend on its annotations, operational runbooks, and troubleshooting patterns. In many organizations, the real migration starts by inventorying what ingress-nginx has become, not what Gateway API promises.

It maps Kubernetes Ingress resources to NGINX configuration generated by the controller. The model is simple, but large estates often drift into annotation sprawl. That creates hidden coupling between application manifests and controller-specific behavior. The specification itself is intentionally limited, so expressive routing and policy often live in annotations rather than portable APIs.

A concrete operational fact many teams learn late: the hardest part of an ingress-nginx to Gateway API migration is not route conversion. It is discovering which production behaviors are annotation-defined and therefore controller-specific, including rewrite behavior, header manipulation, authentication integration, rate limiting, and custom snippets. That inventory step usually dominates the first phase.
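One way to start that inventory is to count annotation usage across exported Ingress objects. The sketch below assumes the objects have already been loaded as Python dictionaries, for example from the `items` list of `kubectl get ingress --all-namespaces -o json`. The function name and the sample objects are hypothetical.

```python
from collections import Counter

# ingress-nginx annotations share this key prefix.
NGINX_PREFIX = "nginx.ingress.kubernetes.io/"


def inventory_annotations(ingresses):
    """Count how many Ingress objects use each ingress-nginx annotation key."""
    usage = Counter()
    for ing in ingresses:
        annotations = ing.get("metadata", {}).get("annotations") or {}
        for key in annotations:
            if key.startswith(NGINX_PREFIX):
                usage[key[len(NGINX_PREFIX):]] += 1
    return usage


# Hypothetical sample objects standing in for a real cluster export.
sample = [
    {"metadata": {"name": "shop", "annotations": {
        NGINX_PREFIX + "rewrite-target": "/",
        NGINX_PREFIX + "configuration-snippet": "more_set_headers 'X-Test: 1';",
    }}},
    {"metadata": {"name": "api", "annotations": {
        NGINX_PREFIX + "rewrite-target": "/$1",
        "example.com/owner": "team-a",  # not an ingress-nginx annotation
    }}},
    {"metadata": {"name": "plain"}},  # no annotations at all
]

usage = inventory_annotations(sample)
for key, count in usage.most_common():
    print(f"{count:4d}  {key}")
```

Sorting by frequency gives a first cut of which behaviors need a migration answer before any route is converted.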

Open source software, with infrastructure and support costs borne through internal staffing or third-party support. There is no standard public enterprise price because the cost is mainly operational: engineering time, incident risk, and migration debt.

Istio fits organizations that already run, or intend to run, a full traffic management and security platform across north-south and east-west flows. If your Gateway API migration must align with existing mesh policy, mTLS, and traffic controls, Istio is often shortlisted first.

Istio supports Gateway API while retaining its own APIs and operational model. In practice, many enterprises use Gateway API for ingress-facing resources while still relying on Istio-specific constructs elsewhere. That is both a strength and a complication. You can standardize ingress abstractions without abandoning mesh capabilities, but your platform may still expose two conceptual layers.

An engineering fact worth calling out: in several enterprise rollouts, Gateway API support reduces the amount of custom platform glue needed for delegated route ownership, but it does not eliminate the need to understand underlying Istio behavior. Teams still need to reason about the interaction between Gateway API resources and mesh-wide policy.

Core software is open source. Enterprise support typically comes through commercial distributions or platform vendors and is usually custom-quoted as of 2026. Cost shape depends on whether you are buying support only or a broader platform bundle.

Kong is usually strongest where the ingress boundary is also an API governance boundary. If your ingress layer already handles authentication, transformation, rate limiting, or developer-facing API concerns, Kong can turn a Kubernetes Gateway API migration into a broader API platform consolidation.

Kong Gateway Operator exposes Kubernetes-native workflows while aligning with the Kong data plane and plugin model. Gateway API becomes the control surface for ingress patterns, but many higher-order capabilities still derive from Kong-native extension points. That matters for lock-in analysis. The migration may simplify routing resources while deepening dependence on a specific policy and plugin ecosystem.

A practical fact that matters in RFP discussions: Kong can look highly portable at the route layer and far less portable at the policy layer once you rely on plugins for auth, transformations, and traffic controls. Architects should score route portability and policy portability separately.

Open source entry points exist, but most enterprise programs evaluate Kong Enterprise support and features, which are custom-quoted as of 2026. Commercial cost often scales with gateway deployment scope, feature set, and support terms.

Envoy Gateway is attractive to platform teams that want Gateway API as the primary abstraction rather than as an adapter layer on top of a larger product strategy. It is often the most direct answer when the question is how to migrate from Ingress to Gateway API in Kubernetes without also adopting a full mesh or full API management stack.

It builds on Envoy and centers the Kubernetes Gateway API resource model. That makes it easier to explain to application teams and easier to align with a standards-first platform roadmap. The trade-off is ecosystem maturity. You need to verify that the specific policies, extensions, and operational hooks your estate requires are implemented and supportable at your pace.

A useful engineering fact: Envoy Gateway often looks simpler on paper than in enterprise migration programs because ingress-nginx estates contain years of annotation-driven behavior that has no direct one-to-one mapping. Simpler target architecture does not erase source complexity.

Open source software. Support economics depend on whether you run it yourself, adopt third-party support, or consume it through a platform offering. No standard public enterprise pricing is consistently available as of 2026.

| Criteria | ingress-nginx | Istio | Kong Gateway Operator | Envoy Gateway |
|---|---|---|---|---|
| Primary role | Ingress controller baseline | Mesh plus ingress platform | API gateway plus ingress platform | Gateway API-centric ingress platform |
| Gateway API as primary model | No | Partial in many deployments | Yes for ingress workflows, with vendor-specific policy extensions | Yes |
| Can ingress and gateway api run in the same cluster during migration | Yes, as source platform | Yes, common migration pattern | Yes, common migration pattern | Yes, common migration pattern |
| Multi-team delegation model | Weak, usually annotation and namespace convention driven | Strong | Strong | Strong |
| Traffic splitting for low-risk cutover | Controller-specific and limited by current design | Strong | Strong | Strong for standard HTTP routing patterns |
| Annotation dependency risk | High | Medium | Medium to high if plugins are heavily used | Medium |
| Operational footprint | Low to medium | High | Medium to high | Medium |
| Commercial support pricing | No standard public price | Custom-quoted via vendors or distributions | Custom-quoted | No consistent public enterprise price |
| Best fit | Stable estates delaying migration | Mesh-aligned enterprises | API-centric enterprises | Standards-first ingress modernization |
| Public hard numbers on feature-level conformance | Partial and implementation-specific | Varies by release and distribution | Varies by release | Varies by release |

Architects do not need a single winner. They need a shortlist tied to workload shape, governance model, and migration risk.

If one option is rarely the best choice in a realistic enterprise profile, say it plainly: staying indefinitely on ingress-nginx is usually not the best strategic choice for multi-team platform evolution. It still wins as a short-term hold position, but not as the long-term governance model for large shared clusters.

The direct cost of a Kubernetes Gateway API migration is rarely dominated by installing a new controller. The expensive parts are resource translation, policy redesign, and parallel operations during coexistence. In most enterprise estates, the critical path includes:

For estates under 50 ingress objects with limited annotation usage, migration can be a measured 2 to 6 engineer-weeks. For large enterprise estates with hundreds of ingresses, multiple hostname ownership patterns, and custom snippets, 8 to 20 engineer-weeks is a more realistic planning range before broad rollout. The variance comes mostly from source complexity, not target controller installation.

Yes. Whether Ingress and Gateway API can run in the same cluster during migration is one of the first questions to settle, and the answer is usually yes when you control class selection, listener exposure, and hostname ownership carefully. The common enterprise pattern is side-by-side deployment, shifting selected hostnames or traffic percentages gradually, then removing Ingress resources after application teams validate behavior.
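Hostname ownership is the part of coexistence that is easiest to check mechanically. The sketch below lists hostnames claimed by both an Ingress (`spec.rules[].host`) and an HTTPRoute (`spec.hostnames`), assuming both resource lists are already loaded as dictionaries. It compares exact names only and ignores wildcard matching, which is a simplification. The function names and sample objects are hypothetical.

```python
def _owner(obj):
    """Return a namespace/name label for a Kubernetes object dictionary."""
    meta = obj.get("metadata", {})
    return f'{meta.get("namespace", "default")}/{meta.get("name", "?")}'


def ingress_hosts(ingresses):
    """Map each hostname to the Ingress objects that claim it."""
    hosts = {}
    for ing in ingresses:
        for rule in ing.get("spec", {}).get("rules") or []:
            host = rule.get("host")
            if host:
                hosts.setdefault(host.lower(), []).append(_owner(ing))
    return hosts


def route_hosts(routes):
    """Map each hostname to the HTTPRoute objects that claim it."""
    hosts = {}
    for route in routes:
        for host in route.get("spec", {}).get("hostnames") or []:
            hosts.setdefault(host.lower(), []).append(_owner(route))
    return hosts


def contested_hostnames(ingresses, routes):
    """Return hostnames claimed on both sides, with their owners."""
    old, new = ingress_hosts(ingresses), route_hosts(routes)
    return {
        host: {"ingress": old[host], "httproute": new[host]}
        for host in sorted(old.keys() & new.keys())
    }


# Hypothetical sample objects for illustration.
ingresses = [
    {"metadata": {"namespace": "web", "name": "shop"},
     "spec": {"rules": [{"host": "shop.example.com"}, {"host": "old.example.com"}]}},
]
routes = [
    {"metadata": {"namespace": "web", "name": "shop-route"},
     "spec": {"hostnames": ["Shop.example.com", "new.example.com"]}},
]

overlap = contested_hostnames(ingresses, routes)
```

An overlap is not necessarily an error, since a hostname may sit on both sides deliberately in the middle of a cutover. The point of the check is that every overlap is one somebody chose.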

There is no universal converter that solves the real problem. Straightforward host, path, and backend mappings are usually easy. The pain is in annotations related to rewrites, regex behavior, timeouts, auth integrations, canary rules, custom headers, and snippets. A serious ingress-nginx to Gateway API migration should classify each annotation into one of four buckets: native Gateway API mapping, target-controller policy mapping, application change required, or behavior to retire. That classification spreadsheet becomes the migration program.
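The four-bucket classification can be kept as data rather than as a spreadsheet alone. The sketch below is illustrative only: the bucket assigned to each annotation is a placeholder assumption, not a statement about what any controller supports, and the real assignment depends on your target controller and on how each annotation is actually used. Anything not yet reviewed is reported as unclassified so that it forces a decision.

```python
from enum import Enum


class Bucket(Enum):
    NATIVE = "native Gateway API mapping"
    CONTROLLER_POLICY = "target-controller policy mapping"
    APP_CHANGE = "application change required"
    RETIRE = "behavior to retire"


# Placeholder assignments for illustration; replace with your own review.
# Keys are ingress-nginx annotation names without the common prefix.
ASSUMED_BUCKETS = {
    "rewrite-target": Bucket.NATIVE,
    "canary-weight": Bucket.NATIVE,
    "proxy-read-timeout": Bucket.CONTROLLER_POLICY,
    "auth-url": Bucket.CONTROLLER_POLICY,
    "configuration-snippet": Bucket.APP_CHANGE,
}


def classify(annotation_counts, buckets=ASSUMED_BUCKETS):
    """Group annotation names by bucket; unknown names stay unclassified."""
    grouped = {bucket: [] for bucket in Bucket}
    unclassified = []
    for name in sorted(annotation_counts):
        bucket = buckets.get(name)
        if bucket is None:
            unclassified.append(name)
        else:
            grouped[bucket].append(name)
    return grouped, unclassified


# Hypothetical usage counts, e.g. produced by an annotation inventory.
counts = {"rewrite-target": 120, "auth-url": 14, "server-snippet": 3}
grouped, unclassified = classify(counts)
```

Keeping unknown annotations out of every bucket is a deliberate choice: a default bucket would hide exactly the controller-specific behavior the inventory is meant to surface.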

If you are writing a scorecard for Kubernetes Ingress versus Gateway API, put precise questions in front of vendors and internal platform candidates. These are the criteria most likely to expose real differences:

Some enterprise teams review ingress modernization and external delivery costs in the same quarter, especially when board scrutiny is focused on TCO, egress, and contract flexibility. Those are separate layers. Gateway API solves cluster entry and delegation. It does not solve CDN economics or simplify external traffic pricing by itself.

For teams looking at that adjacent problem, BlazingCDN is a relevant alternative to consider once the cluster-side architecture is clear. It offers stability and fault tolerance comparable to Amazon CloudFront while remaining significantly more cost-effective for enterprises and large corporate clients, with volume pricing starting at $4 per TB and reaching $2 per TB at 2 PB+ commitments, plus flexible configuration and fast scaling under demand spikes. A practical place to start is BlazingCDN compared to major providers.

Run a 30-day proof of concept that answers three questions, not thirty. First, can your chosen target reproduce the top ten behaviors hidden in your current ingress-nginx annotations. Second, can platform and app teams share ownership cleanly using Gateway and Route boundaries. Third, can you cut over one noncritical production hostname with weighted traffic and a verified rollback path.
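For the third question, a weighted cutover behind the Gateway can be expressed as an HTTPRoute with two weighted `backendRefs`. The sketch below builds such a manifest as a dictionary and prints it as JSON, which `kubectl apply -f` accepts. All names, the port, and the helper function are hypothetical. Note that this splits traffic between two Services behind the Gateway. It does not by itself move traffic from the old Ingress controller to the new Gateway, which usually happens upstream (for example at DNS or a load balancer, depending on your environment).

```python
import json


def weighted_http_route(name, namespace, gateway, gateway_namespace,
                        hostname, old_service, new_service, port, new_weight):
    """Build an HTTPRoute that sends new_weight percent to new_service."""
    if not 0 <= new_weight <= 100:
        raise ValueError("new_weight must be between 0 and 100")
    return {
        "apiVersion": "gateway.networking.k8s.io/v1",
        "kind": "HTTPRoute",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            # Attach the route to a Gateway owned by the platform team.
            "parentRefs": [{"name": gateway, "namespace": gateway_namespace}],
            "hostnames": [hostname],
            "rules": [{
                "backendRefs": [
                    {"name": old_service, "port": port, "weight": 100 - new_weight},
                    {"name": new_service, "port": port, "weight": new_weight},
                ],
            }],
        },
    }


# Hypothetical names: send 10% of traffic to the new backend.
canary = weighted_http_route(
    "shop", "web", "shared-gateway", "infra",
    "shop.example.com", "shop-v1", "shop-v2", 8080, new_weight=10,
)
# Rollback is the same manifest with new_weight=0.
rollback = weighted_http_route(
    "shop", "web", "shared-gateway", "infra",
    "shop.example.com", "shop-v1", "shop-v2", 8080, new_weight=0,
)
print(json.dumps(canary, indent=2))
```

Generating the rollback manifest from the same function as the canary keeps the rollback path a one-parameter change, which makes it easier to rehearse during the proof of concept.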

If you are in procurement mode, add one contract clause before the next vendor call: require feature support commitments to be tied to a named release and conformance level, not general roadmap language. If you are in platform mode, ask your team a sharper question: which exact annotations would block us from moving 80% of our ingress estate in one quarter? That answer will tell you more than another generic Gateway API migration guide.
