Learn

Best CDN for Video Streaming in 2026: Full Comparison with Real Performance Data


In March 2026, an AWS customer running 14 million daily requests through CloudFront discovered that a single misconfigured S3 website redirect rule had silently returned 307 Temporary Redirects to 9% of their traffic for 11 hours before anyone noticed. The origin had been swapped, the redirect was supposed to be permanent, and the cache kept serving stale 307s because nobody invalidated the right path patterns. That one mistake cost them indexed URLs, a measurable organic traffic dip, and two SRE on-call escalations. If you are planning a CloudFront S3 redirect migration in 2026, this playbook gives you the decision matrix, the layer-by-layer execution sequence, and the rollback diagnostics to avoid exactly that scenario.

The failure mode has shifted. In 2024, most incidents traced back to DNS propagation lag or missing OAC policies. As of Q1 2026, the dominant cause is layer confusion: teams apply redirect logic at the wrong tier of the stack, or at multiple tiers simultaneously, producing redirect chains that inflate TTFB by 200–400 ms and confuse crawlers. CloudFront now supports three distinct redirect surfaces — S3 static website hosting rules, CloudFront Functions (viewer request/response), and Lambda@Edge (origin request/response) — and each behaves differently with respect to caching, header propagation, and HTTP status code fidelity.

The second emerging pattern is continuous deployment misuse. AWS GA'd CloudFront continuous deployment with staging distributions in late 2024 and iterated on the traffic-splitting controls through 2025. In 2026, teams are using it for redirect rollouts but treating the staging distribution as a full canary — it is not. The staging distribution shares the same alternate domain names (CNAMEs), which means a redirect rule mismatch between staging and production can surface intermittently to real users in ways that defy simple percentage-based reasoning.

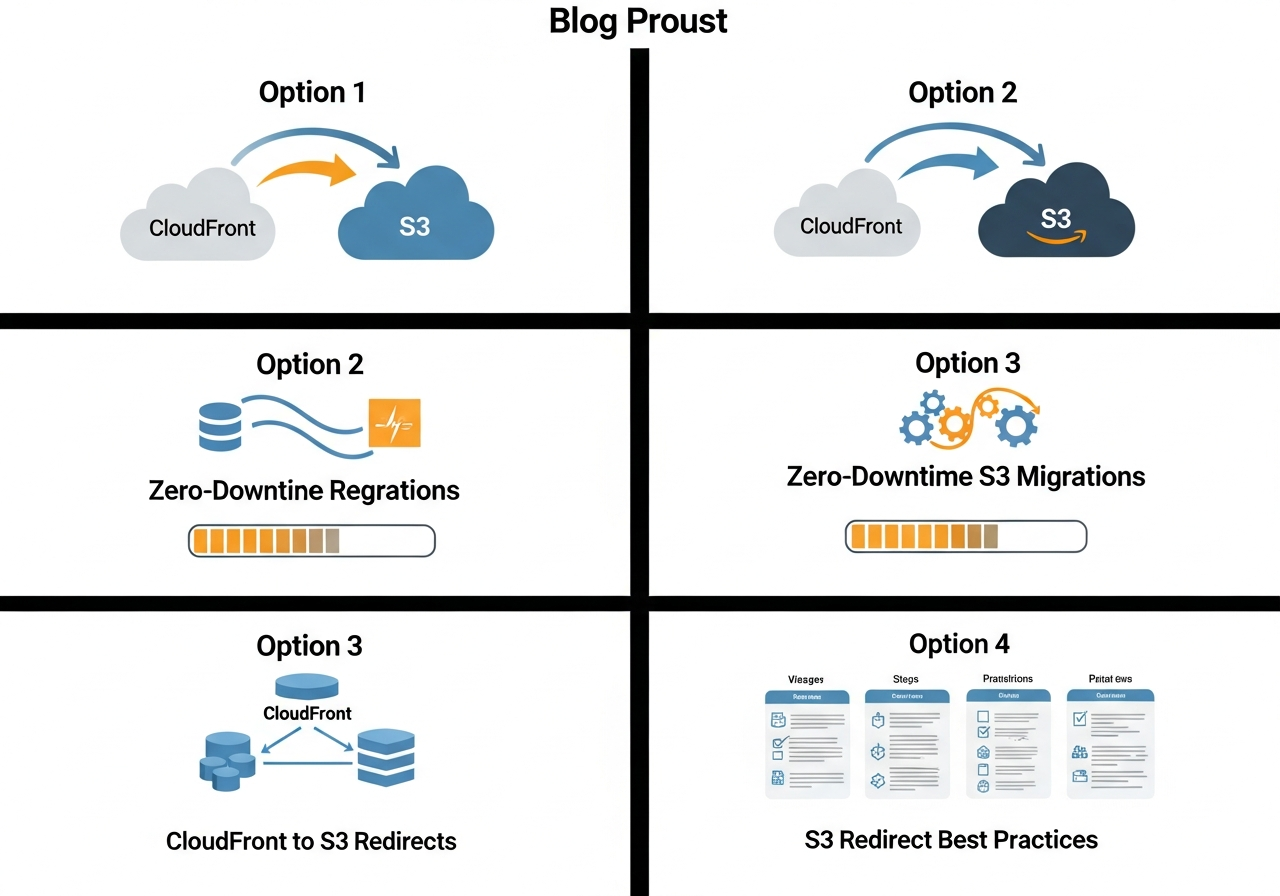

This is the section missing from every other guide on the topic. Where you place redirect logic determines latency overhead, cache interaction, programmability ceiling, and cost per invocation. Use the matrix below against your actual workload profile.

| Redirect Surface | Latency Overhead | Cacheable | Dynamic Logic | Cost at 100M req/mo | Best For |
|---|---|---|---|---|---|
| S3 Website Redirect Rules | ~0 ms (origin-side) | Yes, via CloudFront | Prefix-only, no query string logic | $0 (S3 request pricing only) | Simple bucket-to-bucket permanent moves |
| CloudFront Functions (viewer request) | Sub-1 ms | No (runs before cache) | JS subset, 10 KB limit, no network I/O | ~$10 (as of 2026 pricing: $0.10/million) | High-volume path rewrites, geo or header-based routing |
| Lambda@Edge (origin request) | 5–50 ms cold start, sub-5 ms warm | Yes (response cached) | Full Node.js/Python, network I/O, 30 s timeout | ~$60–$150 depending on duration | DB-lookup redirects, complex rewrite maps, A/B split |

The critical subtlety: S3 website redirect rules require your CloudFront origin to point at the S3 website endpoint (bucket-name.s3-website-region.amazonaws.com), not the REST API endpoint. If you use the REST endpoint with OAC, S3 redirect rules are silently ignored. This single misconfiguration accounts for a large share of "my cloudfront redirect to s3 bucket isn't working" reports.
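A quick preflight check can catch this before deployment. The sketch below classifies an origin domain name by pattern; the function name and the example bucket names are hypothetical, and the patterns only cover the standard `amazonaws.com` partition (other partitions come back as "other").

```javascript
// Classify a CloudFront origin domain name as an S3 website endpoint,
// an S3 REST endpoint, or something else.
// Website endpoints use "s3-website-<region>" or "s3-website.<region>".
function classifyS3Origin(domainName) {
  var name = String(domainName).toLowerCase();
  if (/\.s3-website[.-][a-z0-9-]+\.amazonaws\.com$/.test(name)) {
    return "website"; // S3 routing rules are evaluated here
  }
  if (/\.s3[.-]([a-z0-9-]+\.)?amazonaws\.com$/.test(name)) {
    return "rest"; // S3 routing rules are NOT evaluated here
  }
  return "other";
}

console.log(classifyS3Origin("my-bucket.s3-website-us-east-1.amazonaws.com"));
console.log(classifyS3Origin("my-bucket.s3.us-east-1.amazonaws.com"));
```

If a distribution that relies on S3 redirect rules reports "rest" for its origin, the rules will never fire.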

Before touching any configuration, snapshot your current state. Pull CloudFront access logs for the past 30 days and extract every unique URI path, the origin it resolved to, and the HTTP status code returned. You need this as a regression test. If you have more than 10,000 unique paths, group by prefix and status code — you are looking for existing 3xx responses that your new redirect rules might conflict with.
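As a rough illustration of that grouping, the sketch below counts CloudFront standard log rows by first path segment and status code. It assumes the tab-separated standard log layout in which `cs-uri-stem` and `sc-status` are the eighth and ninth fields; confirm this against the `#Fields:` header of your own logs. The function names and sample rows are made up for the example.

```javascript
// Count log rows per "<first path segment> <status>" pair.
function summarizeLogLines(lines) {
  var counts = {};
  lines.forEach(function (line) {
    if (!line || line.charAt(0) === "#") return; // skip header and blank lines
    var fields = line.split("\t");
    if (fields.length < 9) return; // malformed row
    var uri = fields[7];    // cs-uri-stem
    var status = fields[8]; // sc-status
    var firstSegment = uri.split("/")[1] || "";
    var key = "/" + firstSegment + " " + status;
    counts[key] = (counts[key] || 0) + 1;
  });
  return counts;
}

// Return only the prefix/status pairs that are already 3xx responses.
function existingRedirects(counts) {
  return Object.keys(counts).filter(function (key) {
    return /^3\d\d$/.test(key.split(" ")[1]);
  }).sort();
}

// Helper that builds a sample row (values are illustrative only).
function sampleRow(uri, status) {
  return ["2026-03-01", "12:00:00", "IAD89-C1", "512", "203.0.113.10",
    "GET", "d111111abcdef8.cloudfront.net", uri, status].join("\t");
}

var sample = [
  "#Version: 1.0",
  sampleRow("/docs/a.html", "200"),
  sampleRow("/docs/b.html", "301"),
  sampleRow("/docs/c.html", "301"),
  sampleRow("/img/x.png", "200")
];
console.log(existingRedirects(summarizeLogLines(sample)));
```

The pairs returned by `existingRedirects` are the ones to compare against any new rule before it ships.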

Use the decision matrix above. For most migrations where you are moving content from one S3 bucket to another behind the same CloudFront distribution, CloudFront Functions on viewer-request is the correct choice in 2026. It executes before the cache check, which means every request sees the redirect immediately without waiting for a cache invalidation. The 10 KB code-size limit is sufficient for redirect maps of up to roughly 300 path rules when minified.
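A minimal sketch of such a viewer-request function follows. The redirect map entries and the `assets.example.com` host are placeholders, not values from any real migration; the event and response shapes follow the CloudFront Functions event structure (`event.request.uri`, `statusCode`, and lowercase header names with a `value` field).

```javascript
// Hypothetical redirect map: old path -> new absolute URL.
var REDIRECTS = {
  "/old/pricing.html": "https://assets.example.com/pricing.html",
  "/old/docs/": "https://assets.example.com/docs/"
};

// CloudFront Functions entry point for a viewer-request association.
function handler(event) {
  var request = event.request;
  var target = Object.prototype.hasOwnProperty.call(REDIRECTS, request.uri)
    ? REDIRECTS[request.uri]
    : null;
  if (target === null) {
    return request; // no rule matched: continue to the cache and origin
  }
  return {
    statusCode: 301,
    statusDescription: "Moved Permanently",
    headers: { location: { value: target } }
  };
}
```

Exact-match lookup keeps the function small and predictable; prefix matching is possible but consumes more of the 10 KB budget and is easier to get wrong.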

If your redirect map exceeds that, or if you need to query DynamoDB or an external service to resolve the target, Lambda@Edge on origin-request is the answer. Place it on origin-request (not viewer-request) so that the redirect response itself gets cached — you pay for the Lambda invocation once per cache miss, not once per viewer request.
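The following sketch shows the shape of an origin-request handler for that case. `lookupTarget` is a hypothetical stand-in for the real DynamoDB or service call, and its in-memory table exists only so the example runs on its own; the response format (string `status`, headers as arrays of `{ key, value }`) is the Lambda@Edge one.

```javascript
// Stand-in for an external lookup (DynamoDB, an internal API, ...).
// Hypothetical: replace with a real client call in your environment.
async function lookupTarget(uri) {
  var table = { "/legacy/report": "https://new.example.com/reports/latest" };
  return Object.prototype.hasOwnProperty.call(table, uri) ? table[uri] : null;
}

// Build a redirect in the Lambda@Edge response shape.
function buildRedirect(target) {
  return {
    status: "301",
    statusDescription: "Moved Permanently",
    headers: {
      location: [{ key: "Location", value: target }]
    }
  };
}

// Origin-request handler: invoked only on cache misses.
async function handler(event) {
  var request = event.Records[0].cf.request;
  var target = await lookupTarget(request.uri);
  return target ? buildRedirect(target) : request;
}

// Export for the Lambda runtime when a CommonJS module system is present.
if (typeof module !== "undefined" && module.exports) {
  module.exports.handler = handler;
}
```

Returning the unmodified `request` lets CloudFront continue to the origin, so paths without a redirect behave exactly as before.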

Create a staging distribution using CloudFront continuous deployment. Attach your CloudFront Function or Lambda@Edge association to the staging distribution only. Set the traffic weight to 1% and monitor for 24 hours. Watch three signals: the 3xx response ratio in CloudFront standard logs, the error rate (4xx/5xx) from the new origin, and cache hit ratio delta versus the production distribution. If all three are within tolerance, ramp in steps up to the 15% ceiling that weight-based policies allow (for example 5%, 10%, 15%), then promote.
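For illustration, the sketch below builds the policy document for a weight-based rollout as a plain object. The field names follow the CloudFront `ContinuousDeploymentPolicyConfig` structure as I understand it, and the 0.15 upper bound reflects the documented limit on weight-based traffic; verify both against the current API reference. The staging domain name is a placeholder.

```javascript
// Upper bound on the share of traffic a weight-based policy may route
// to staging (assumption: 15%, per the CloudFront API documentation).
var MAX_STAGING_WEIGHT = 0.15;

// Build a continuous deployment policy config for a given staging weight.
function buildPolicyConfig(stagingDnsName, weight) {
  var valid = typeof weight === "number" &&
    weight >= 0 && weight <= MAX_STAGING_WEIGHT;
  if (!valid) {
    throw new RangeError("weight must be between 0 and " + MAX_STAGING_WEIGHT);
  }
  return {
    StagingDistributionDnsNames: { Quantity: 1, Items: [stagingDnsName] },
    Enabled: true,
    TrafficConfig: {
      Type: "SingleWeight",
      SingleWeightConfig: { Weight: weight }
    }
  };
}

// 1% of traffic to a hypothetical staging distribution.
console.log(JSON.stringify(
  buildPolicyConfig("d111111abcdef8.cloudfront.net", 0.01), null, 2));
```

Validating the weight locally gives a clearer error than waiting for the API to reject the request.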

One important constraint as of May 2026: the staging distribution inherits the production distribution's cache behaviors, but you can override function associations per cache behavior. You cannot, however, run different origin configurations between staging and production within the same continuous deployment policy. If your migration involves changing the origin (e.g., from bucket-A to bucket-B), you need to handle that through the redirect logic itself — the Function or Lambda returns a 301 pointing to the new bucket's domain — not through an origin swap on the staging distribution.

Use 301 for permanent moves. Full stop. A 302 tells caches and crawlers the redirect is temporary, which means they will keep requesting the old URL. For S3 website hosting rules, the redirect protocol, host, and HTTP status code are all configurable per rule — verify that the status code is explicitly set to 301 and not left at the default, which is 301 for routing rules but 302 for the top-level redirect on a bucket's website configuration.

This section does not exist in most migration guides, and its absence is why migrations fail at 2 AM.

If the staging distribution shows elevated 5xx or redirect loops, do not promote. Disable the continuous deployment policy, which instantly routes 100% of traffic back through the production distribution. If you have already promoted and the error is now in production, disassociate the Function/Lambda from the cache behavior. CloudFront will propagate the change globally within 2–5 minutes (as of 2026, propagation times have improved from the 15-minute averages seen in 2023). While waiting, push a cache invalidation on /* to clear any cached 301 responses pointing to the wrong destination.

Instrument three CloudWatch metrics for at least 7 days: 4xxErrorRate, 5xxErrorRate, and CacheHitRate at the distribution level. CacheHitRate is one of CloudFront's additional metrics, so enable those on the distribution first. Set alarms at 1% for error rates and at a 5% drop in cache hit ratio. Additionally, run a crawl of your top 500 URLs through a redirect-chain checker within 48 hours. Search engine crawlers may take days to revisit all paths, and you want to catch any lingering 302s or chains before they get indexed.
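A live crawl needs network access, but the redirect map itself can be checked offline for chains and loops before or alongside it. The sketch below is one way to do that, assuming the map is a plain object of source to target; the function names and sample paths are hypothetical.

```javascript
// Follow one source through the map and record each hop.
// Terminates because a revisited source is reported as a loop.
function traceRedirect(map, start) {
  var seen = Object.create(null);
  var hops = [];
  var current = start;
  while (Object.prototype.hasOwnProperty.call(map, current)) {
    if (seen[current]) {
      return { hops: hops, loop: true };
    }
    seen[current] = true;
    current = map[current];
    hops.push(current);
  }
  return { hops: hops, loop: false };
}

// List every source that loops or needs more than one hop.
function findProblems(map) {
  return Object.keys(map).map(function (start) {
    var trace = traceRedirect(map, start);
    return { start: start, hops: trace.hops.length, loop: trace.loop };
  }).filter(function (result) {
    return result.loop || result.hops > 1;
  });
}

var sampleMap = {
  "/a": "/b", "/b": "/c", // chain: /a needs two hops
  "/x": "/y",             // fine: single hop
  "/p": "/q", "/q": "/p"  // loop
};
console.log(findProblems(sampleMap));
```

Any entry this reports can usually be fixed by pointing the source straight at its final destination.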

At 500 million requests per month — a common volume for media and SaaS companies serving static assets — the difference between CloudFront Functions and Lambda@Edge is meaningful. CloudFront Functions will cost approximately $50/month for the function invocations alone (2026 pricing). Lambda@Edge at origin-request, assuming a 90% cache hit ratio, runs against 50 million requests, costing roughly $30–$75/month depending on execution duration. The real cost savings come from getting the cache behavior right so that redirect responses themselves are cached and Lambda invocations drop proportionally.
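The arithmetic behind those figures can be sketched as follows. The $0.10 per million rate for CloudFront Functions comes from the table above; the $0.60 per million request rate for Lambda@Edge is an assumption that matches the lower bound quoted here, and duration charges are deliberately left out, so the Lambda@Edge figure is a floor rather than a total.

```javascript
var CF_FUNCTIONS_PER_MILLION = 0.10;         // USD, rate quoted in this article
var LAMBDA_EDGE_REQUESTS_PER_MILLION = 0.60; // USD, assumed request-only rate

// Estimate monthly invocation charges for both redirect surfaces.
function monthlyRequestCosts(requestsPerMonth, cacheHitRatio) {
  var millions = requestsPerMonth / 1e6;
  // Origin-request Lambda@Edge runs only on cache misses.
  var missMillions = millions * (1 - cacheHitRatio);
  return {
    cloudFrontFunctions: millions * CF_FUNCTIONS_PER_MILLION,
    lambdaEdgeRequestsOnly: missMillions * LAMBDA_EDGE_REQUESTS_PER_MILLION
  };
}

var estimate = monthlyRequestCosts(500e6, 0.9);
console.log(estimate.cloudFrontFunctions.toFixed(2));    // about 50 USD
console.log(estimate.lambdaEdgeRequestsOnly.toFixed(2)); // about 30 USD
```

Varying `cacheHitRatio` shows how quickly the Lambda@Edge figure grows when redirect responses are not cached.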

For organizations running multi-CDN or evaluating alternatives during migration windows, BlazingCDN's CDN comparison is worth reviewing. BlazingCDN delivers fault tolerance and uptime on par with CloudFront while pricing at $4 per TB for standard volumes and as low as $2 per TB at 2 PB+. For enterprises moving large static asset libraries across origins, that pricing delta compounds fast: a 100 TB/month workload is quoted at $350/month on BlazingCDN (an effective $3.50 per TB, below the $4 standard rate) versus significantly more on CloudFront's standard tiers.

S3 website redirect rules do work behind CloudFront, but only when CloudFront's origin is configured as the S3 website endpoint, not the REST API endpoint. If you use Origin Access Control (OAC), S3 website hosting is bypassed entirely and redirect rules will not fire. You must choose: OAC with programmatic redirects at the edge, or website endpoint with S3-native redirects and a public bucket policy.

Choose CloudFront Functions for deterministic, map-based redirects where the logic fits in 10 KB of JavaScript and requires no network calls. Choose Lambda@Edge for redirects that depend on external lookups, session state, or complex conditional logic. If your redirect map is static and under 300 rules, CloudFront Functions is faster and 5–10x cheaper per invocation as of 2026.

Use CloudFront continuous deployment to create a staging distribution, attach your redirect logic (Function or Lambda@Edge) to the staging distribution, route 1% of traffic through it, validate with access logs and CloudWatch error-rate metrics, then progressively ramp and promote. The production distribution continues serving 100% of traffic until you explicitly promote the staging config.

You cannot attach the same CNAME to two CloudFront distributions simultaneously. The approach is to use continuous deployment (which operates within a single distribution pair) for the redirect logic change, then update the CNAME in your DNS to point to the new distribution only after the old distribution's alternate domain name is removed. Lower your DNS TTL to 60 seconds at least 48 hours before the swap.

Browser caching of 301s is a real rollback risk. Browsers cache 301s aggressively, some indefinitely. If you issue a 301 and then need to reverse it, users who received the cached 301 will continue following it until their browser cache expires. Mitigate this by setting Cache-Control: max-age=3600 on your 301 responses during migration, which is short enough to recover from mistakes and long enough to avoid per-request origin hits. After the migration stabilizes, increase or remove the max-age.
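As a small sketch of that mitigation, the helper below builds a 301 in the CloudFront Functions response format with an explicit `cache-control` header. The function name and the example URL are hypothetical; for Lambda@Edge the same header would be expressed as an array of `{ key, value }` objects instead.

```javascript
// Build a 301 response whose browser cache lifetime is bounded.
function redirectWithTtl(location, maxAgeSeconds) {
  return {
    statusCode: 301,
    statusDescription: "Moved Permanently",
    headers: {
      location: { value: location },
      "cache-control": { value: "max-age=" + Math.floor(maxAgeSeconds) }
    }
  };
}

// One hour during the migration window.
console.log(redirectWithTtl("https://assets.example.com/new/", 3600));
```

Keeping the lifetime as a parameter makes it easy to raise the value once the migration has stabilized.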

Pull your CloudFront access logs from the last 7 days. Count how many distinct 3xx responses your distribution is already returning. If that number surprises you, you have pre-existing redirect debt that will compound during migration. Map every 3xx to its origin rule, Function, or Lambda association. Build that inventory before you write a single redirect rule. Then run the staging distribution at 1% for 24 hours and watch the three metrics: error rate, cache hit ratio, and redirect chain depth. That is the only honest way to know if your CloudFront S3 redirect logic is production-ready.
